Key takeaways:

- Tracking AI citations manually isn’t scalable. Without a consistent, automated view across every prompt and platform that matters, from a technology like Scrunch, you're working from snapshots, not signals.

- Citation count is a starting point, not a full strategy. Tracking citation consistency and influence, identifying citation gaps, and other tactics are necessary to intelligently prioritize AI search opportunities.

- Citation ownership, commercial relevance, content quality, and more all play a role in deciding whether to secure placement in a cited source or replace it completely.

You've got questions, we've got answers.

We dug through our call transcripts, scanned our support bot logs, and polled our sales, support, and customer success teams.

These are some of the AI search citation questions that pop up again and again—and the answers you're looking for.

Is there a way to see what sources AI tools use when they give answers?

Short answer: Yes—most AI platforms surface source citations in their responses. But if you want to track them systematically across all of the prompts and platforms you care about at scale, you need a dedicated tool like Scrunch.

Longer answer: AI platforms like ChatGPT, Perplexity, and others often display the sources behind their answers inline. So in theory, you could just ask a question and see what sources get cited.

The problem is that this approach doesn’t scale.

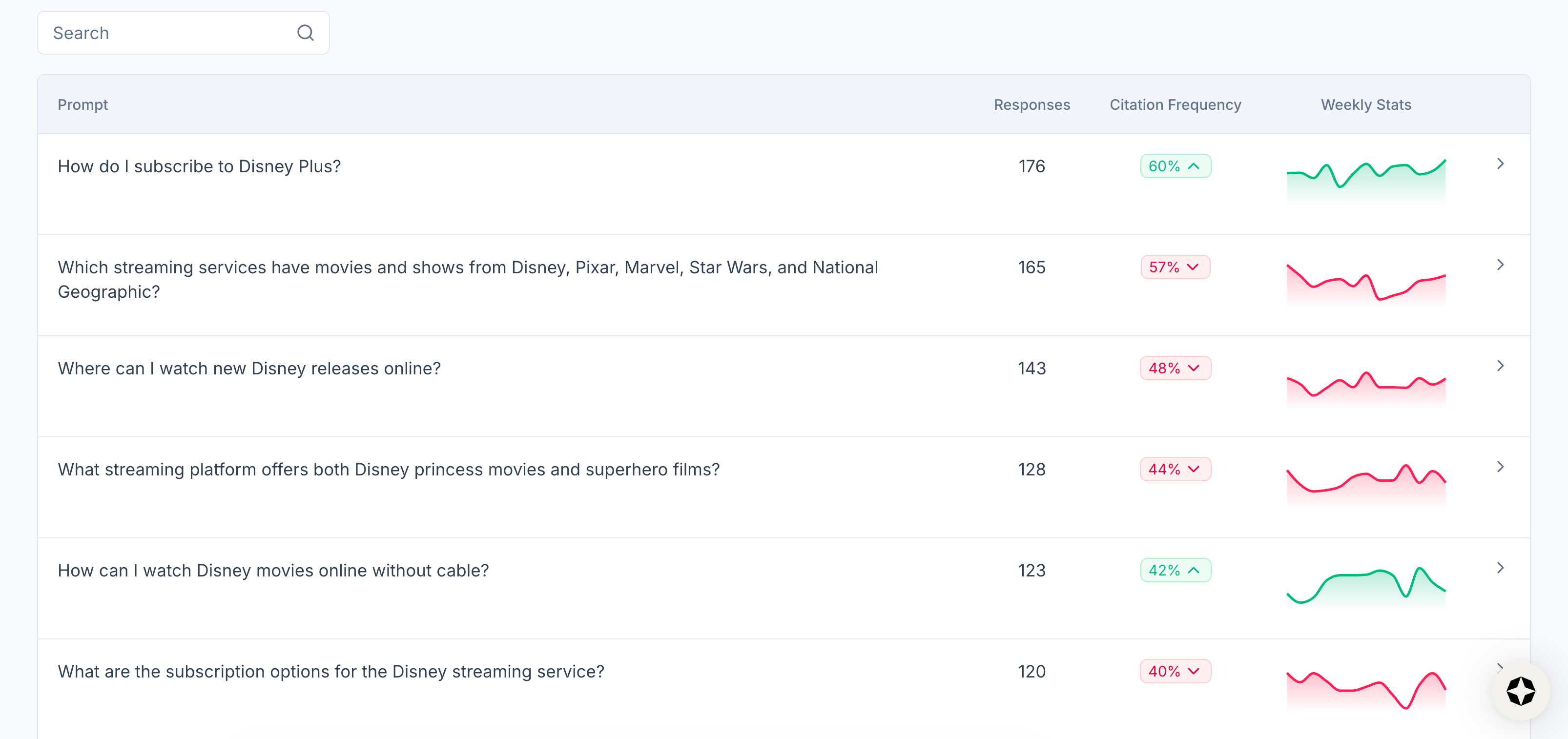

To understand AI citations in any meaningful way, you need to run the same prompts consistently across platforms, log results over time, and look for citation patterns rather than solitary snapshots.

Scrunch's monitoring product makes this easy. Every time we run your prompt set, we capture which sources AI platforms cite in their responses—yours, competitors’, or third parties’.

The result is a running record of citation behavior that reveals real patterns instead of one-off results.

How do I check which sites AI platforms are citing for answers in my category?

Short answer: Use a technology like Scrunch to consistently run category-relevant prompts across the major AI platforms and see which sources appear most frequently.

Longer answer: Start with the questions your buyers actually ask when they're researching your category. Not just branded prompts about your company specifically, but also non-branded, top-of-funnel questions like, "What's the best [product category] for [use case]?"

Run those prompts across major AI platforms and note which sources AI is pulling from. You're looking for patterns: Which domains appear repeatedly? Which URLs get cited across multiple platforms? Is your brand or a competitive brand mentioned in those sources?

This tells you what’s shaping the narrative in your category: industry publications, company blogs, Reddit threads, etc.
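To make the pattern-spotting above concrete, here is a minimal sketch of how a manual citation log could be tallied. The log format, the sample rows, and the function names are all hypothetical and only illustrate the idea; this is not how Scrunch stores or processes data.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse

# Hypothetical citation log: one (platform, prompt, cited_url) row per citation.
CITATION_LOG = [
    ("chatgpt", "best crm for startups", "https://example.com/crm-guide"),
    ("perplexity", "best crm for startups", "https://example.com/crm-guide"),
    ("chatgpt", "crm pricing comparison", "https://reviews.example.org/crm-pricing"),
    ("gemini", "best crm for startups", "https://example.com/crm-checklist"),
]


def domain_counts(log):
    """Count how often each domain is cited across the whole log."""
    return Counter(urlparse(url).netloc for _platform, _prompt, url in log)


def urls_cited_on_multiple_platforms(log, min_platforms=2):
    """Return URLs cited by at least `min_platforms` distinct platforms."""
    platforms_by_url = defaultdict(set)
    for platform, _prompt, url in log:
        platforms_by_url[url].add(platform)
    return {
        url: sorted(platforms)
        for url, platforms in platforms_by_url.items()
        if len(platforms) >= min_platforms
    }
```

Calling `domain_counts(CITATION_LOG).most_common()` answers "which domains appear repeatedly?", while `urls_cited_on_multiple_platforms(CITATION_LOG)` answers "which URLs get cited across multiple platforms?".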

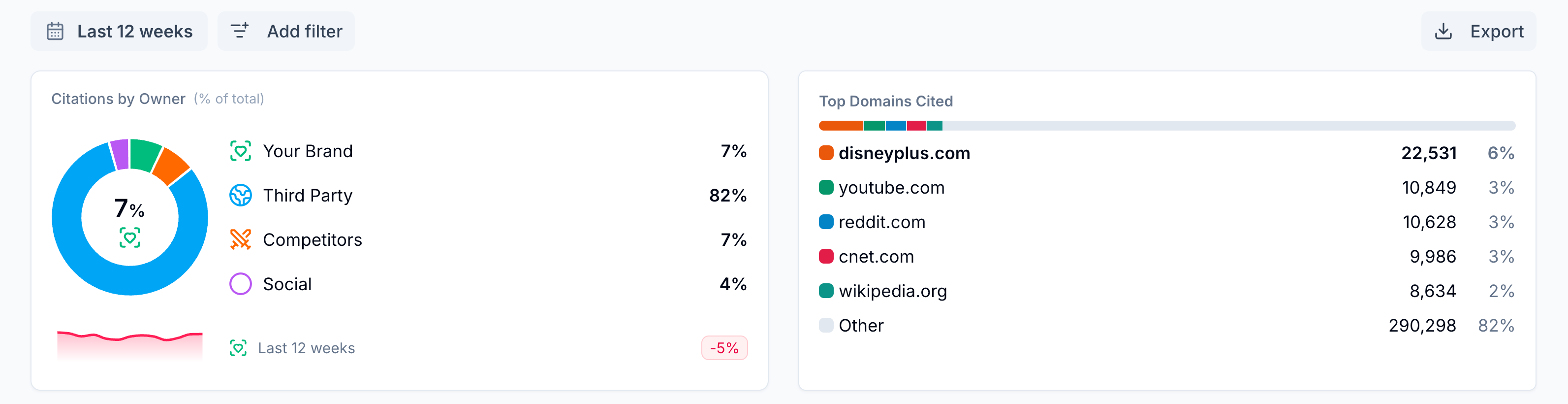

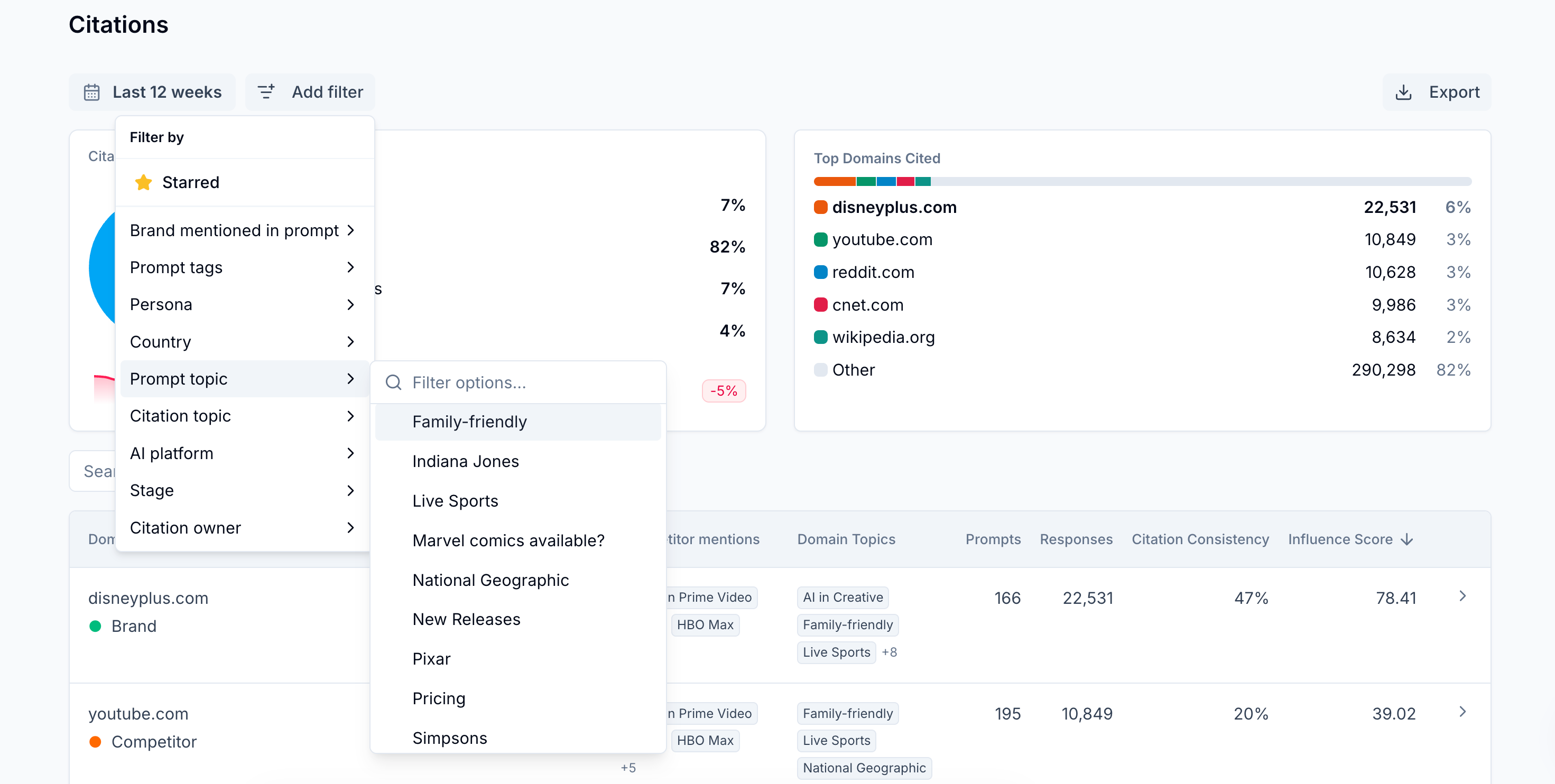

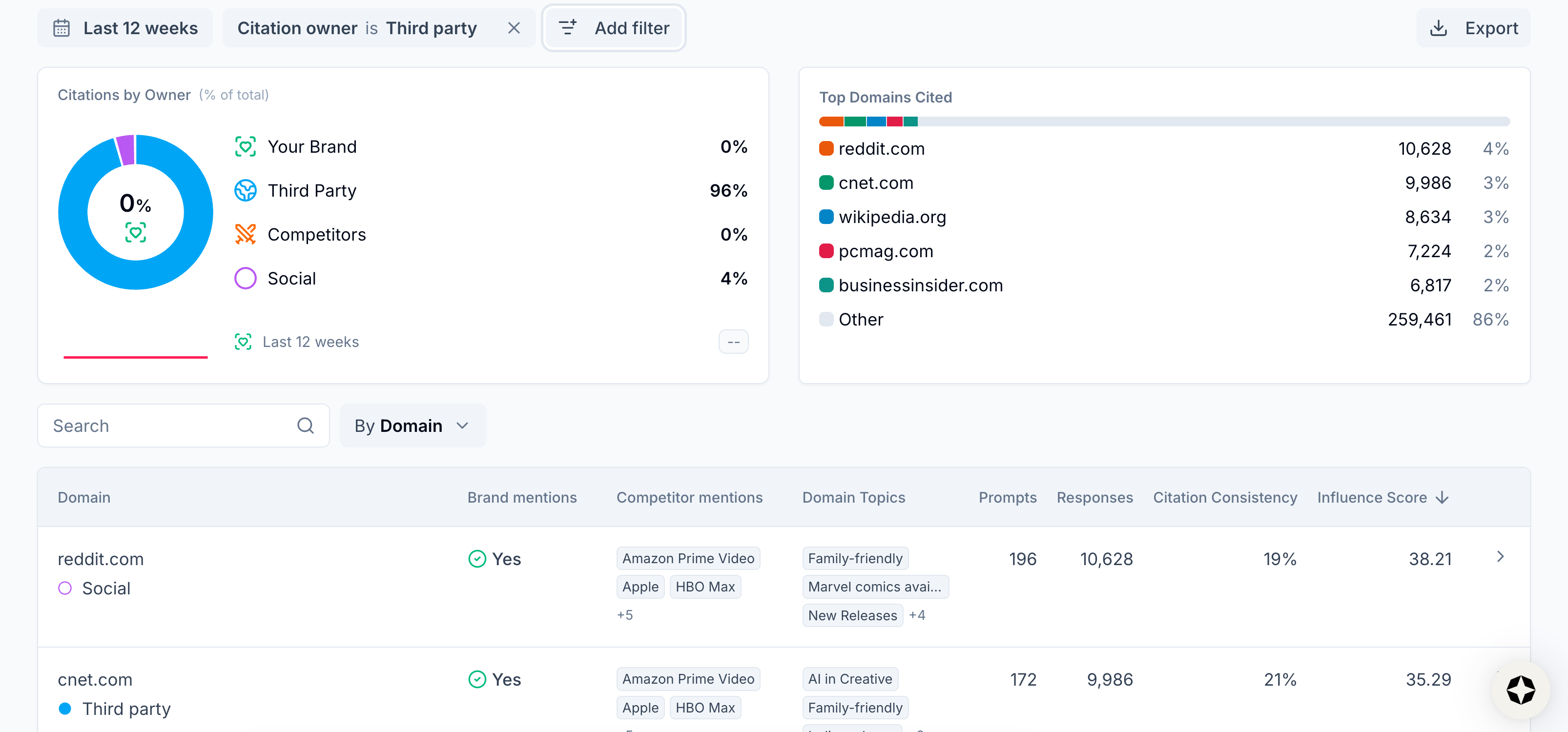

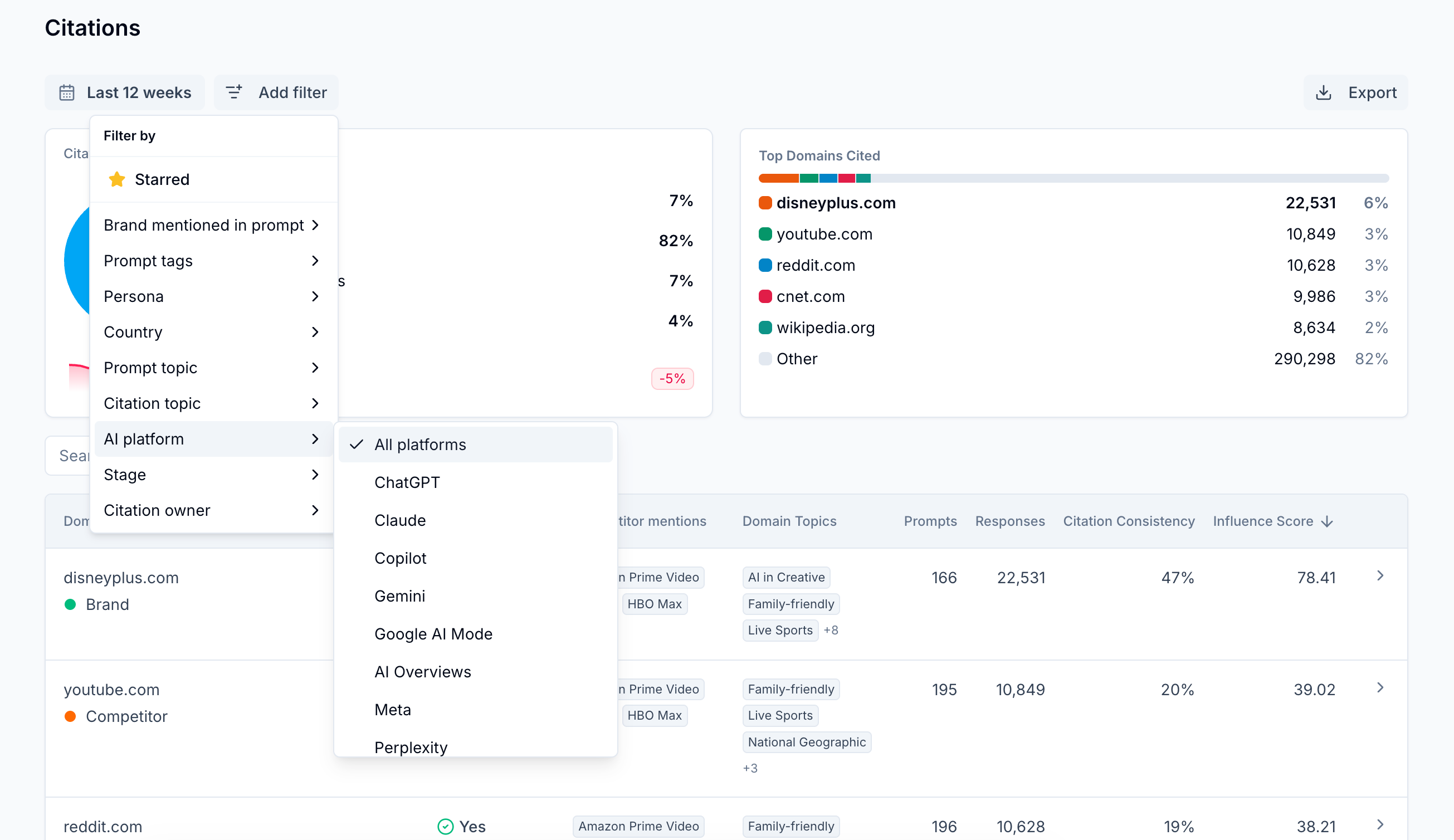

Scrunch surfaces this information at scale. Rather than manually logging citations session by session, you get a consolidated view of which sources are being referenced most frequently across your full prompt set, filterable by topic, persona, funnel stage, platform, and more.

This makes it easy to spot citation leaders in your category without building (and constantly updating) your own tracking infrastructure from scratch.

How can I check which of my pages are being referenced in AI results?

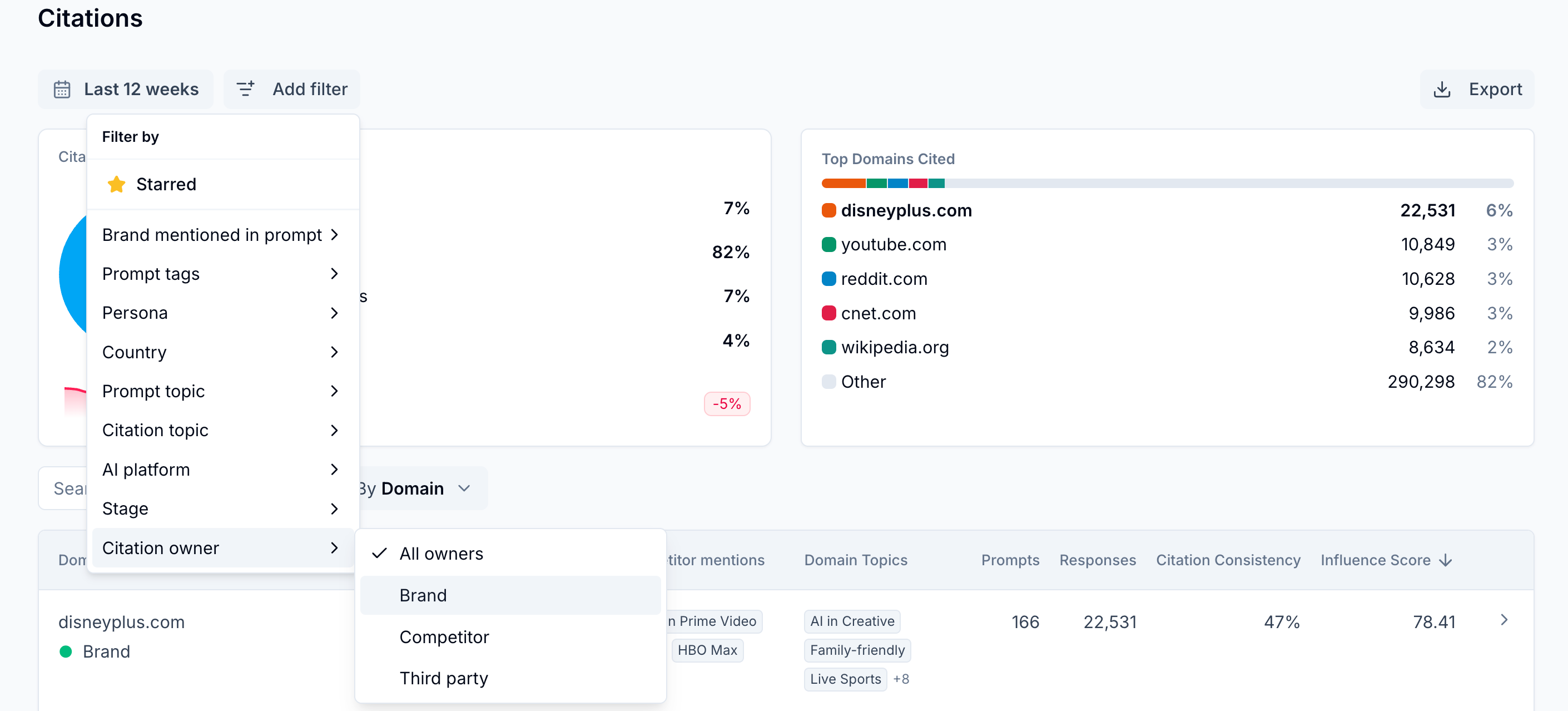

Short answer: Use a platform like Scrunch to filter citation activity across AI platforms down to brand-owned citations only.

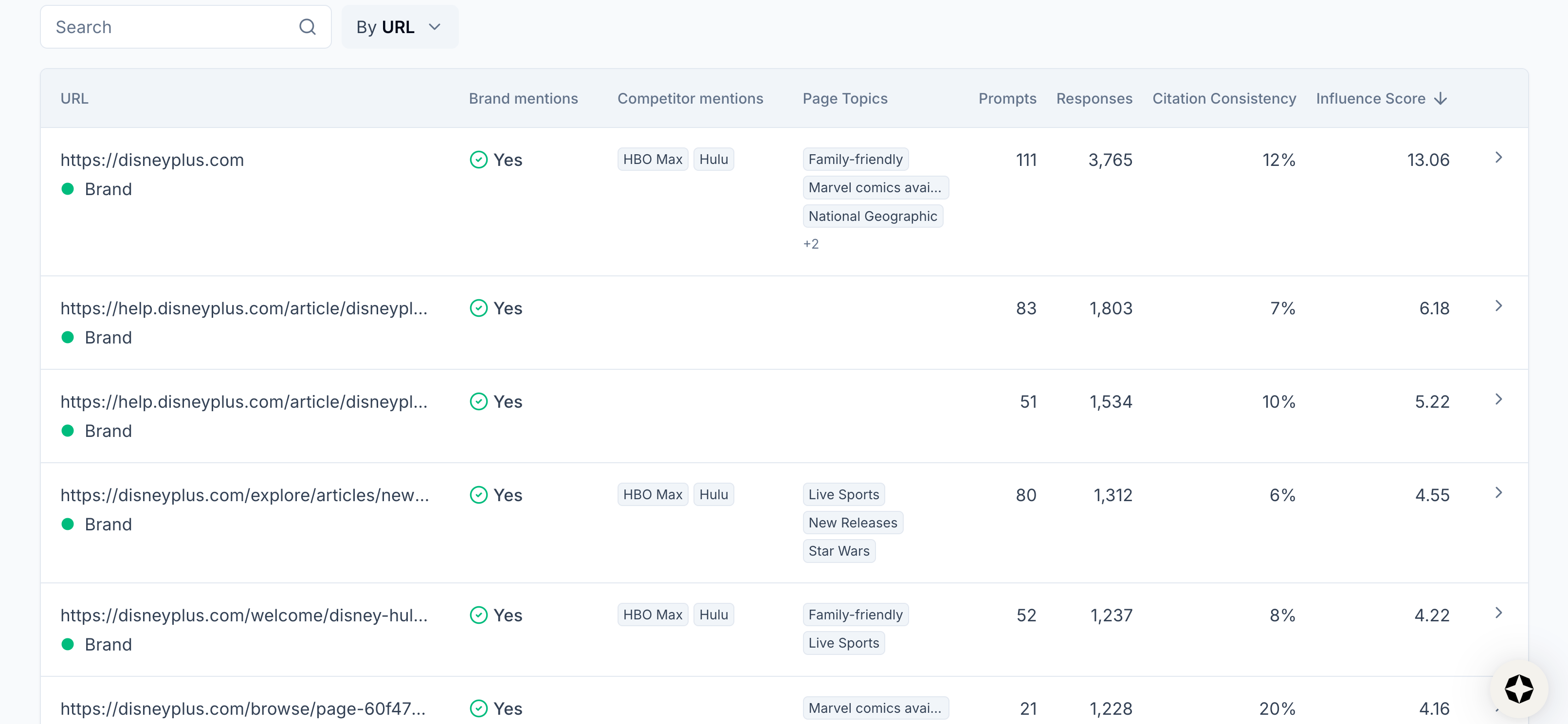

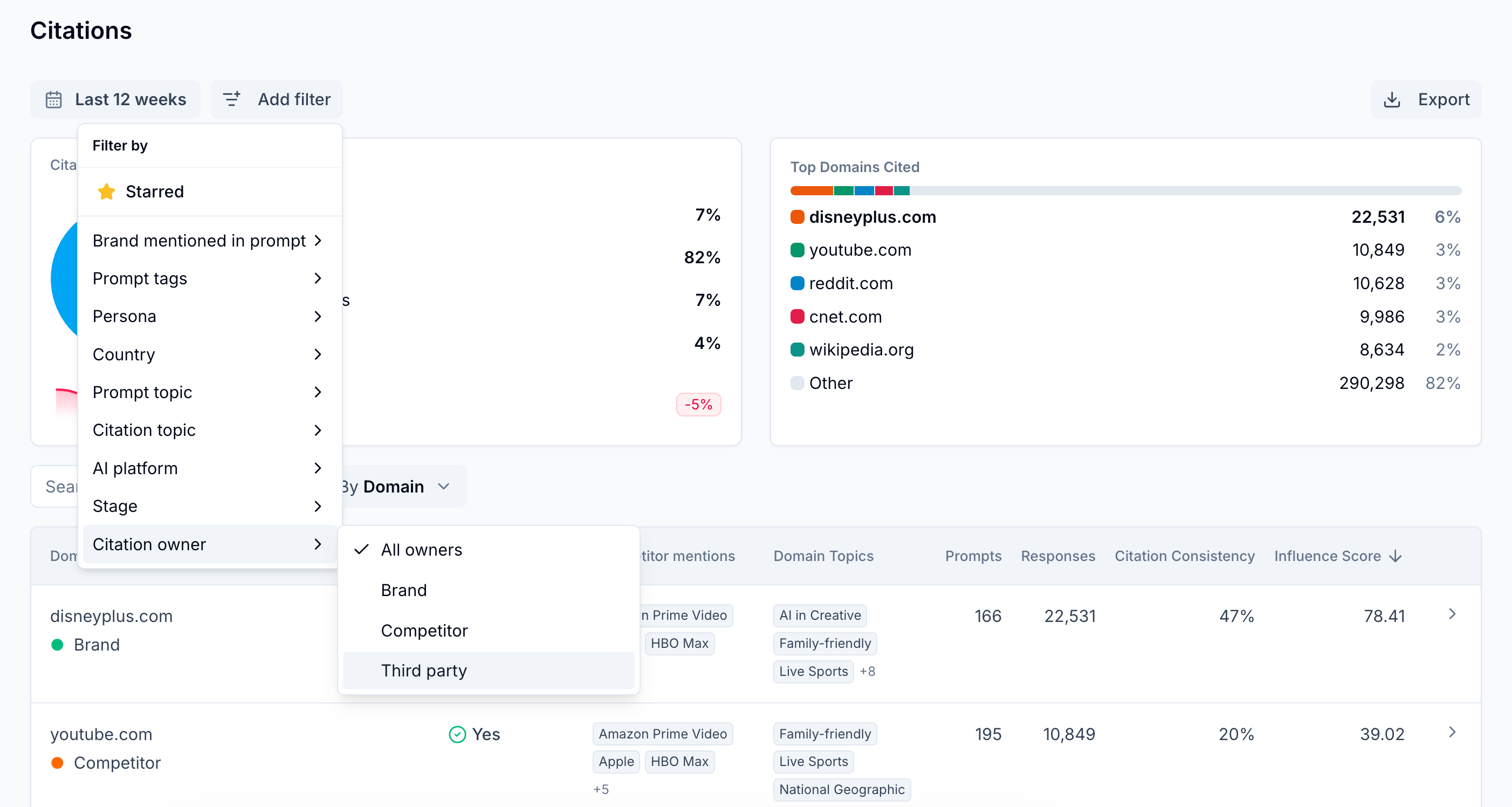

Longer answer: Citations happen at the URL level, not just the domain level.

A technical FAQ might outperform a flagship product page. A blog post from 18 months ago might be doing more work than your homepage.

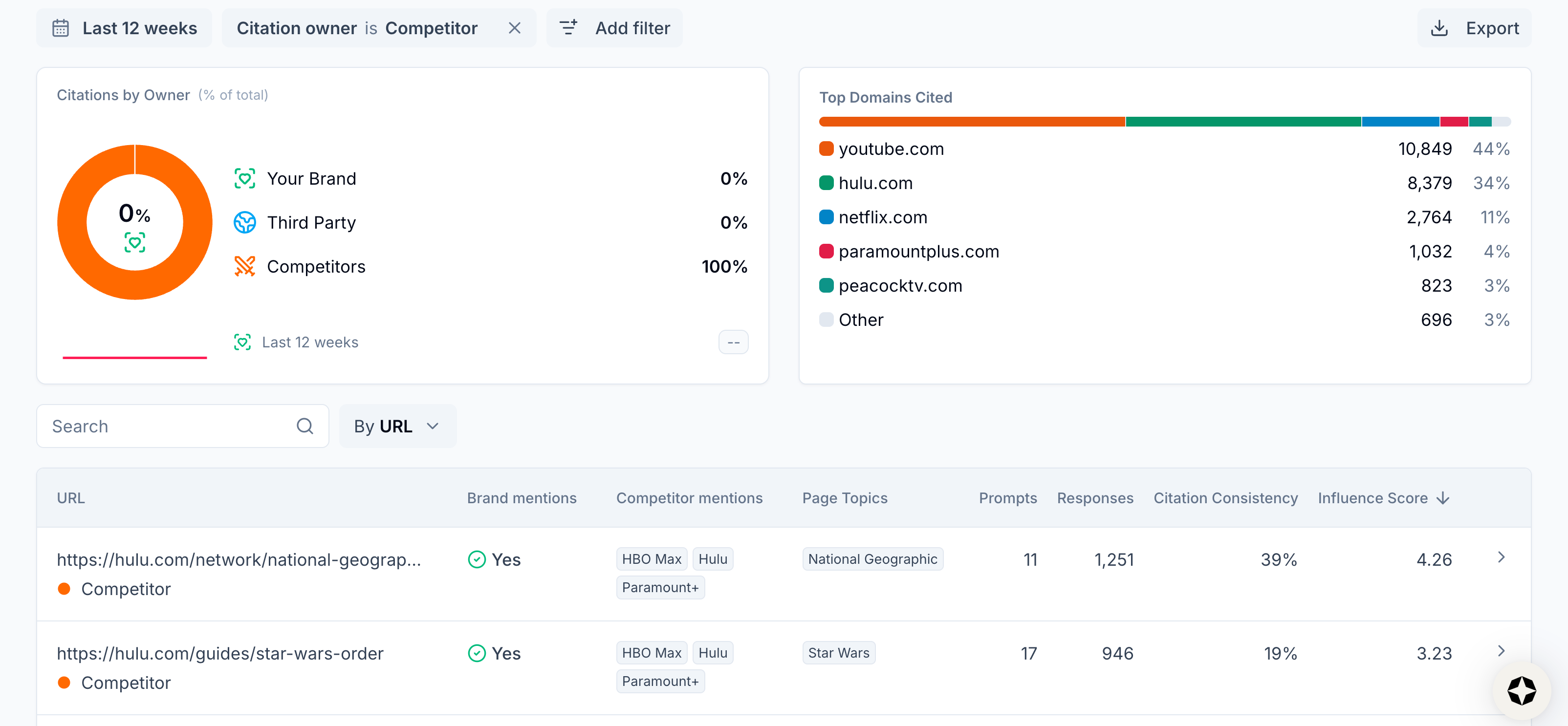

Scrunch makes it easy to understand exactly which of your pages are pulling their weight in AI search. You can filter your view of citations by ownership: brand, competitor, or third party.

Ultimately, what you really want to do is build a map: which pages AI is visiting, which it's citing, and which it's skipping entirely. Each category tells you something different.

Pages being visited but not cited usually have a fixable problem—a technical issue, a content gap, or a structural barrier that's getting in the way. Pages being cited consistently are worth protecting and building on. Pages being ignored altogether may need more fundamental attention.

Scrunch's Site Maps feature gives you exactly this view, page by page, across your entire site. Pair it with citation tracking to see which URLs are being referenced for which prompts and topics—and where the gap between AI interest and AI citation is widest.

That gap is a high-leverage opportunity list.
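The page map described above (visited, cited, skipped) can be sketched as a simple three-way classification. This is an illustrative sketch only: the inputs are assumed to be plain sets of URL paths you have collected yourself, and the function and bucket names are hypothetical.

```python
def classify_pages(all_urls, visited, cited):
    """Sort each site URL into one of three buckets.

    all_urls: every page on the site
    visited:  pages AI agents were seen requesting (assumed input)
    cited:    pages that appeared as citations in AI responses (assumed input)
    """
    buckets = {"cited": [], "visited_not_cited": [], "ignored": []}
    for url in sorted(all_urls):
        if url in cited:
            buckets["cited"].append(url)
        elif url in visited:
            buckets["visited_not_cited"].append(url)
        else:
            buckets["ignored"].append(url)
    return buckets


# Hypothetical example data.
SITE_URLS = {"/pricing", "/blog/faq", "/about"}
VISITED = {"/pricing", "/blog/faq"}
CITED = {"/blog/faq"}
PAGE_MAP = classify_pages(SITE_URLS, VISITED, CITED)
```

In this example the `visited_not_cited` bucket (here, `/pricing`) is the opportunity list: pages AI already reads but does not yet cite.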

Is there a way to track how many times AI platforms cite my content?

Short answer: Yes. Track citation count over time and citation consistency across AI platforms, both of which are automated in Scrunch.

Longer answer: A raw citation number is a starting point, not a full picture.

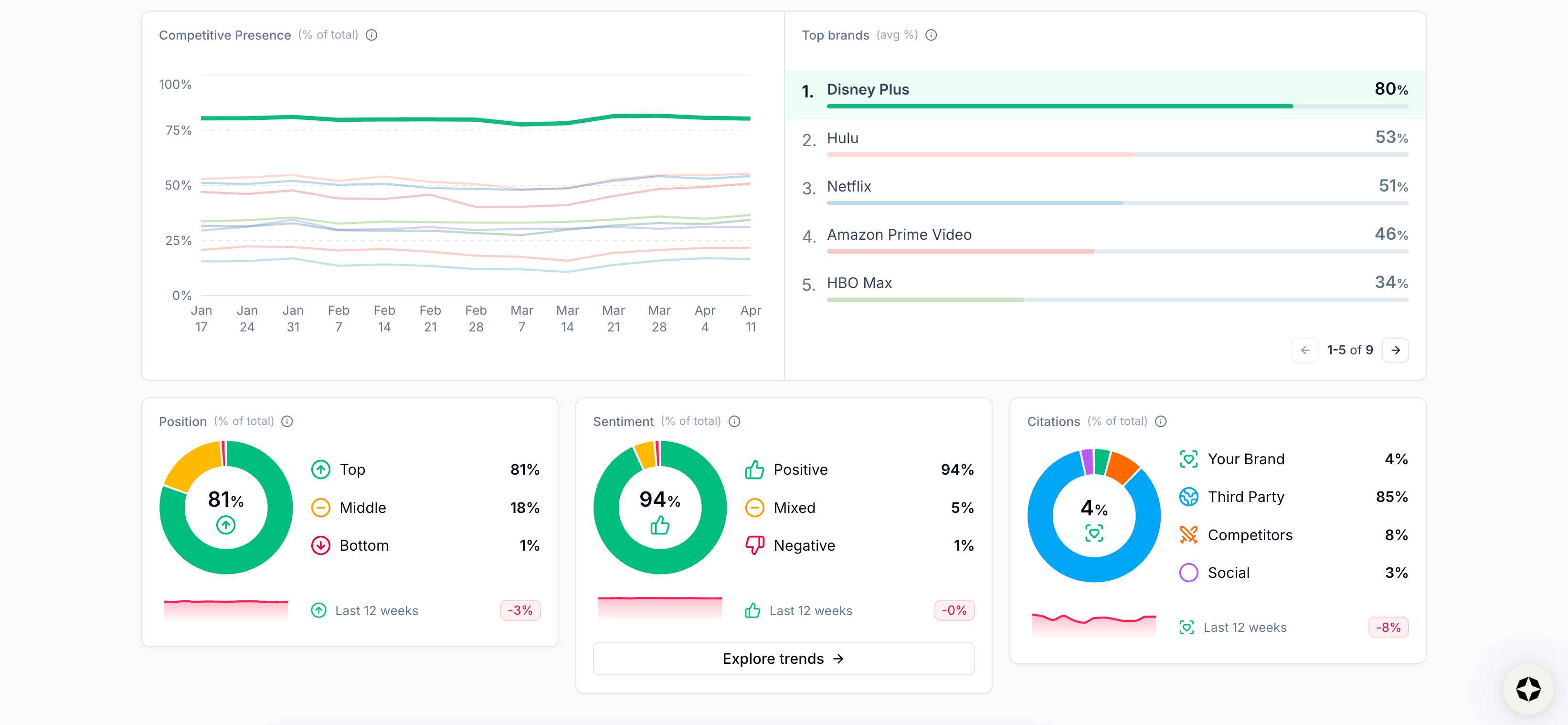

Beyond knowing the percentage of citations your brand gets for tracked prompts over different time periods, it pays to understand the number of unique prompts you’re being cited for, how many AI responses you’re cited in, and how consistently you’re being cited. Consistency is calculated by dividing the number of responses that cited you by the total number of responses that cited any source.

Plus, you’ll want to be able to slice and dice that data based on different dimensions—topics, AI platforms, funnel stages, etc.

All of this is served up automatically in Scrunch, at the domain and URL level.
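For readers who want to see the arithmetic, the three measures described above can be sketched in a few lines. The record format and names below are hypothetical; the consistency calculation follows the definition given in this section (responses that cited you divided by responses that cited any source).

```python
# Hypothetical response records: the prompt answered and the domains cited.
RESPONSES = [
    {"prompt": "best crm for startups", "cited_domains": {"example.com", "reviews.example.org"}},
    {"prompt": "best crm for startups", "cited_domains": {"reviews.example.org"}},
    {"prompt": "crm pricing comparison", "cited_domains": {"example.com"}},
    {"prompt": "what is a crm", "cited_domains": set()},  # no citations at all
]


def citation_metrics(responses, domain):
    """Compute unique prompts, responses cited in, and citation consistency."""
    # Only responses that cited at least one source count toward the denominator.
    with_citations = [r for r in responses if r["cited_domains"]]
    citing = [r for r in with_citations if domain in r["cited_domains"]]
    consistency = len(citing) / len(with_citations) if with_citations else 0.0
    return {
        "unique_prompts": len({r["prompt"] for r in citing}),
        "responses_cited_in": len(citing),
        "consistency": consistency,
    }


METRICS = citation_metrics(RESPONSES, "example.com")
```

Here `example.com` is cited in 2 of the 3 responses that cite anything, so its consistency is about 0.67 across 2 unique prompts.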

Our advice: Don’t just focus on citation frequency. Also understand which citations really move the needle in AI search. Scrunch makes this possible with its Influence Score: the percentage of AI responses that have cited a source multiplied by the number of unique prompts that source is cited for.
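As a rough worked example of that multiplication, the sketch below follows the definition as stated here. The function name, the 0 to 100 percentage scale, and the choice of denominator (responses that cited any source) are assumptions; the score Scrunch reports may be scaled or computed differently.

```python
def influence_score(responses_citing_source, responses_with_citations, unique_prompts):
    """Percentage of responses citing a source, multiplied by unique prompts.

    Assumes the percentage is expressed on a 0-100 scale.
    """
    if responses_with_citations == 0:
        return 0.0
    cited_pct = 100 * responses_citing_source / responses_with_citations
    return cited_pct * unique_prompts


# Source A: cited less often, but across many prompts.
SCORE_A = influence_score(30, 120, 8)  # 25% x 8 prompts
# Source B: cited more often, but for only two prompts.
SCORE_B = influence_score(60, 120, 2)  # 50% x 2 prompts
```

Under these assumptions Source A scores 200 and Source B scores 100, which illustrates why raw citation frequency alone can understate a source's reach.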

How can I tell how often my pages are being cited vs. ignored?

Short answer: Get a page-level view of your website that shows which pages AI is visiting, which it's citing, and which it's skipping—ready out of the box with Scrunch.

Longer answer: Getting a true bird's-eye view of citation performance across your site is harder than it sounds.

To do it manually, you'd need to map out every URL on your site, run prompts consistently across AI platforms, log which pages get cited each time, and update the whole thing on a regular basis.

It’s a recipe for stale and/or bloated spreadsheets.

Besides, the question you're really trying to answer isn't just, "How often am I cited?" It's, "For the prompts that matter to my business, which pages should be cited but aren’t?"

A page can be overlooked by AI for different reasons. It might have technical issues that make it hard for agents to crawl or parse. The content might not answer the question directly enough to earn a citation. Or a better-structured external source might be consistently winning that spot instead.

Each root cause has a different fix—and you can't find the right one until you can see the full picture.

Scrunch's Site Maps feature gives you that view automatically: which pages AI agents are visiting, which they're citing, and where the gap between the two is widest.

From there, you can quickly audit the page in question to discover where the problem is, optimize page content, and deliver it directly to LLMs in an AI-friendly format via Scrunch’s Agent Experience Platform (AXP).

How do I find third-party pages that AI keeps citing where I could get my brand included?

Short answer: Use a tool like Scrunch to pull the most frequently cited third-party sources for your tracked prompts, filter for pages where your brand isn't mentioned, and treat those as outreach or content partnership targets.

Longer answer: Not every citation win requires you to build something new. Sometimes the highest-leverage move is getting mentioned on a page that AI already trusts.

If a particular URL keeps showing up in AI responses for prompts that you care about and your brand isn't on it, that’s a gap worth filling.

AI has already signaled that it finds this source authoritative. Securing a mention in it is the most direct path to influencing what AI says about your category.

Scrunch helps you identify these pages systematically rather than stumbling across them (if you’re lucky).

Citation tracking surfaces which third-party domains and URLs are appearing most frequently across your prompt set and are most influential in shaping AI responses. Cross-reference that information against pages that don't mention your brand and you have a prioritized list of outreach targets with AI-backed evidence of their impact.

This is the kind of data that makes PR and content partnership conversations a lot easier to have internally, too. Instead of, "We think this publication matters," you can say, "AI cites this page for half of our top prompts."
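The cross-referencing step described above can be sketched as a filter and a sort. Everything in this example is hypothetical: the record fields, the sample sources, and the brand name "Acme" are placeholders, and a plain substring match is a deliberately crude stand-in for real brand-mention detection.

```python
# Hypothetical cited sources with ownership, citation counts, and page text.
SOURCES = [
    {"url": "https://reviews.example.org/best-crm", "ownership": "third_party",
     "citations": 42, "page_text": "Top picks: OtherCo and RivalSoft."},
    {"url": "https://news.example.net/crm-trends", "ownership": "third_party",
     "citations": 17, "page_text": "Acme leads the pack this year."},
    {"url": "https://rival.example.com/compare", "ownership": "competitor",
     "citations": 30, "page_text": "Why RivalSoft wins."},
    {"url": "https://forum.example.org/crm-thread", "ownership": "third_party",
     "citations": 55, "page_text": "Has anyone tried RivalSoft?"},
]


def outreach_targets(sources, brand):
    """Third-party sources that never mention the brand, most cited first."""
    brand_lower = brand.lower()
    targets = [
        s for s in sources
        if s["ownership"] == "third_party"
        and brand_lower not in s["page_text"].lower()
    ]
    return sorted(targets, key=lambda s: s["citations"], reverse=True)


TARGET_URLS = [s["url"] for s in outreach_targets(SOURCES, "Acme")]
```

The result is a ranked list of third-party pages that AI cites often but that do not yet mention the brand.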

How do I identify which citations are the easiest to replace with better content?

Short answer: Use a technology like Scrunch to surface the citation sources stealing your thunder, then pinpoint those with thin or outdated content, poor page structure, or obvious discrepancies between title, description, and page content.

Longer answer: Not all cited sources are equally entrenched. A generic listicle published three years ago that happens to get cited often is a very different target than a comprehensive, recently updated guide from an authoritative domain.

The easiest displacement opportunities typically share a few traits: The content is shallow or old. The page structure isn’t optimized for AI. And the content itself fails to deliver on the intent of the prompt or the promise of the page title and description.

Scrunch automates the process of finding which sources are cited for the prompts you care about. From there it’s a matter of comparing those sources to existing content on your site that you can update (or net new content that you can create).

The goal isn't to create content that mimics the cited source—it's to create something genuinely more useful that answers the same question with more depth and relevance, served up in the format AI prefers.

Keep in mind: Scrunch’s Deep AI Audit feature gives you a read on the technical and content quality of your own pages to help you make necessary fixes.

What criteria matter most when deciding whether to replace an existing cited source?

Short answer: Use a platform like Scrunch to understand if a citation is commercially relevant, influential, and beatable.

Longer answer: Not every citation displacement opportunity is worth chasing. Before investing in one, it's worth pressure-testing the opportunity against a few questions.

Is the prompt worth competing for? Start with commercial relevance. A citation for a prompt that maps to a bottom-of-funnel buying decision should probably be a priority. A citation for a tangential prompt with low purchase intent may not matter as much.

How much influence does the source actually have? Content that’s cited irregularly for a small number of prompts isn’t as important as content that broadly and repeatedly shapes AI responses.

Is the content beatable? Sources with sub-par or outdated content are the easiest displacement targets. Fresh, comprehensive content from high-authority domains will take more time and effort to beat.

Sometimes the smarter move is earning a mention in a cited source rather than trying to outrank it (unless the page is from a competitor, in which case replacing it is your best bet).

Scrunch’s citation tracking can help you judge whether the prompt in question is worth the effort, how influential the cited source is, and which approach to take based on whether that source is owned by a competitor or a third party.

In general, you want to ask yourself whether the juice is worth the squeeze and whether you can credibly do better. If the answer to both questions is yes, that’s a signal to proceed.

How should teams evaluate which citation opportunities are worth pursuing first?

Short answer: Prioritize prompts that combine high purchase intent with weak incumbent sources—cross-referenced against the source’s influence—using a tool like Scrunch.

Longer answer: The instinct is to chase whichever citations feel most visible. The better move is to chase the ones most likely to drive outcomes.

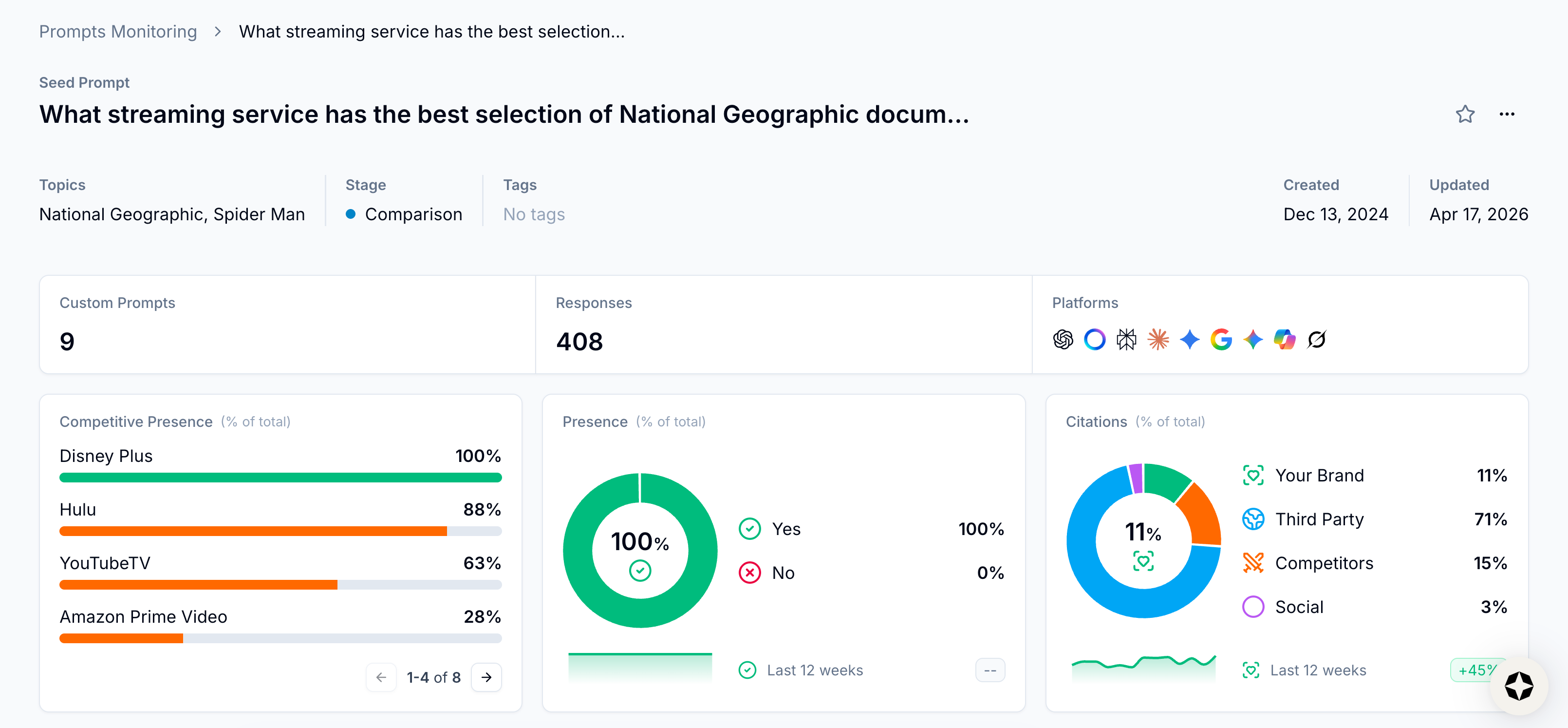

A useful way to frame it: Every citation opportunity sits somewhere on two dimensions.

The first is commercial intent—how directly does this prompt connect to a buyer in evaluation or purchase mode?

The second is displacement difficulty—how entrenched is the source currently winning that citation?

The opportunities worth pursuing first are where those two dimensions align: high-intent prompts where the incumbent content is thin, outdated, or clearly mismatched to what it's supposed to answer.

High-intent, high-difficulty opportunities are worth a longer-term content or PR investment.

Low-intent opportunities, regardless of how easy they'd be to win, probably aren't where your team's time should go right now.

What makes this prioritization possible is having the right data. Scrunch’s Influence Score—which measures citation consistency multiplied by unique prompts cited—tells you how much a source is actually shaping AI responses.

Scrunch also lets you filter citation data by topic, persona, funnel stage, AI platform, and more so you can isolate the prompts that matter most to your brand and see exactly which sources are winning them.

From there, prioritization becomes a lot less subjective.
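The two-dimension framing above (commercial intent and displacement difficulty) can be sketched as a small decision function. The 0 to 1 scoring scale, the 0.5 threshold, and the label names are all assumptions made for illustration; how you score intent and difficulty in practice is up to your team.

```python
def prioritize(intent, difficulty, threshold=0.5):
    """Map an opportunity to a priority bucket.

    intent:     0-1 score for how close the prompt is to a buying decision
    difficulty: 0-1 score for how entrenched the incumbent source is
    """
    if intent < threshold:
        # Low intent: not worth the time right now, however easy the win.
        return "deprioritize"
    if difficulty < threshold:
        # High intent, weak incumbent: pursue first.
        return "pursue first"
    # High intent, strong incumbent: longer-term content or PR investment.
    return "long-term investment"


# Hypothetical opportunities as (prompt, intent, difficulty).
OPPORTUNITIES = [
    ("best crm for startups", 0.9, 0.2),
    ("crm vs spreadsheet", 0.8, 0.9),
    ("history of crm software", 0.1, 0.1),
]
PLAN = {prompt: prioritize(i, d) for prompt, i, d in OPPORTUNITIES}
```

Checking intent first reflects the point made above: low-intent prompts are set aside regardless of how easy they would be to win.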

Can Scrunch reliably show which third-party sites are winning citations over my brand?

Short answer: Yes, competitive citation tracking is one of the most used features in Scrunch—and it runs on the same prompt set as your brand, so you're always comparing apples to apples.

Longer answer: The key word in that question is "reliably." Anyone can manually check a few prompts and see which URLs get cited. The challenge is doing it consistently, across enough prompts to be statistically meaningful, and with enough frequency to spot trends rather than noise.

Scrunch tracks citation data for your brand, competitors, and third parties across the same prompt set simultaneously. So when you look at competitor or third-party citation performance, you're not comparing results from different sessions, different prompt phrasings, or different time windows. You're looking at a controlled comparison.

This matters because it's how you distinguish a real gap from random variation. If a competitor’s or third party's content is getting cited more often than yours across a stable prompt set tracked over several weeks, that's a signal.

We frequently hear from customers that this competitive citation view is what helps make the business case for AEO internally.

How well does Scrunch handle citation tracking across different AI models?

Short answer: Scrunch reliably tracks citations across all major AI platforms simultaneously so you can see not just where you're cited, but whether citation patterns differ by platform.

Longer answer: Citation behavior can vary meaningfully across platforms. ChatGPT, Perplexity, Gemini, and others don't all draw from the exact same sources or weight them the same way.

A page that consistently gets cited by one AI platform might be invisible in another—and vice versa. That variation matters for your strategy.

Scrunch runs your prompt set across all major platforms and captures citation data separately for each. This lets you filter citation results by platform to spot exactly where your coverage is strong and where you have work to do.
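To illustrate what a per-platform breakdown looks like, here is a minimal sketch that computes the share of responses citing a given domain on each platform. The record format and sample data are hypothetical and are not Scrunch's data model.

```python
from collections import Counter

# Hypothetical responses: the platform that answered and the domains it cited.
PLATFORM_RESPONSES = [
    {"platform": "chatgpt", "cited_domains": {"example.com"}},
    {"platform": "chatgpt", "cited_domains": {"example.com", "reviews.example.org"}},
    {"platform": "perplexity", "cited_domains": {"reviews.example.org"}},
    {"platform": "perplexity", "cited_domains": set()},
    {"platform": "gemini", "cited_domains": {"example.com"}},
    {"platform": "gemini", "cited_domains": {"news.example.net"}},
]


def citation_rate_by_platform(responses, domain):
    """Share of each platform's responses that cite `domain`."""
    totals, hits = Counter(), Counter()
    for r in responses:
        totals[r["platform"]] += 1
        if domain in r["cited_domains"]:
            hits[r["platform"]] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}


RATES = citation_rate_by_platform(PLATFORM_RESPONSES, "example.com")
```

In this sample, `example.com` is cited in every ChatGPT response, half of the Gemini responses, and none of the Perplexity responses, which is the kind of platform gap worth investigating.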

Our advice: Start with ChatGPT and Google AI Overviews given their reach, but don't assume that their citation behavior predicts what you'll see elsewhere.

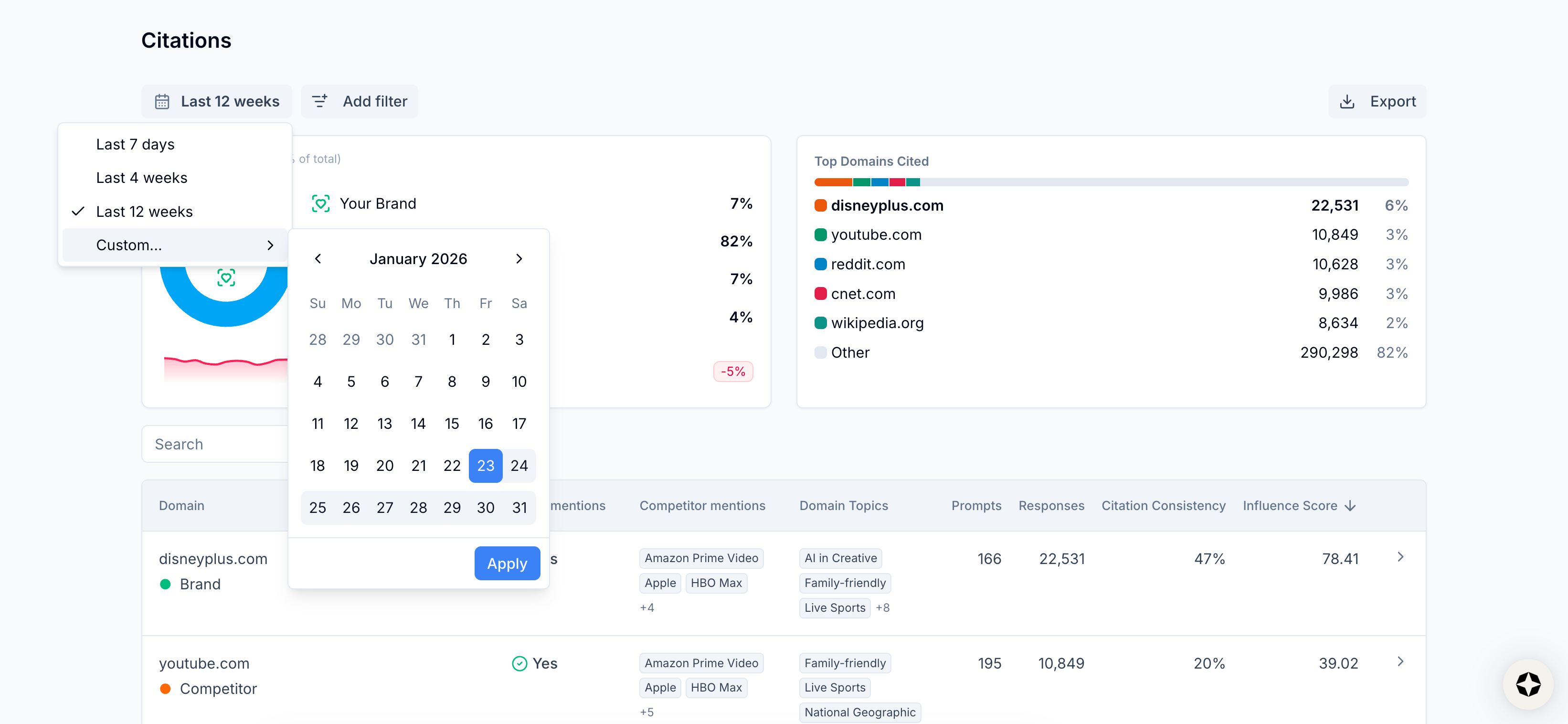

Can Scrunch show historical citation trends to prove progress over time?

Short answer: Yes, Scrunch makes it easy to analyze historical citation trends to help you report on AI search gains over time.

Longer answer: AI search performance can fluctuate for reasons outside of your control—model updates, shifts in the news cycle, platform changes.

This can make it hard for teams to demonstrate that the work they're doing is actually moving the right metrics. All the more reason to consistently measure a stable prompt set over time in order to prove progress.

When you update a page and citation volume climbs over the following weeks, that's not a fluke—it’s a result. When you secure a mention in a third-party source and citations from that domain start appearing in favorable AI answers, that’s a traceable outcome you can point to.

Scrunch tracks citations (and every other important KPI) as fully filterable time-series data. So when something moves, you can see what changed, when it changed, and how your position shifted relative to competitors—not just in aggregate, but for the specific topics, audiences, funnel stages, and AI platforms that matter to your business.
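A simple way to read a time series like this is to compare average weekly citations before and after a known change, such as a page update. The sketch below uses made-up weekly counts and a hypothetical update date. Because AI search fluctuates for reasons outside your control, a before/after difference on its own is suggestive rather than conclusive, so it is best read alongside competitor trends on the same prompt set.

```python
from datetime import date
from statistics import mean

# Hypothetical weekly citation counts for one page: (week_start, citations).
WEEKLY_CITATIONS = [
    (date(2025, 1, 6), 4),
    (date(2025, 1, 13), 5),
    (date(2025, 1, 20), 3),
    (date(2025, 1, 27), 8),
    (date(2025, 2, 3), 9),
    (date(2025, 2, 10), 10),
]


def before_after(series, change_date):
    """Return (mean before, mean after) weekly citations around a change."""
    before = [count for week, count in series if week < change_date]
    after = [count for week, count in series if week >= change_date]
    if not before or not after:
        raise ValueError("need data on both sides of the change date")
    return mean(before), mean(after)


# Assume the page was updated on this (hypothetical) date.
BEFORE, AFTER = before_after(WEEKLY_CITATIONS, date(2025, 1, 24))
```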

This isn't every question our team fields about AI search citations, but it covers a lot of conversational ground.

Got more questions? See our FAQs.

Want to dig deeper? Check out our AI search guide.

Ready to see for yourself? Get in touch or take Scrunch for a test drive.
