Key takeaways:

- You can check if your brand (or a competitor’s brand) appears in AI answers manually—but you need a technology like Scrunch to do it consistently and scalably across every prompt and platform that matters.

- Brand presence tells you if you're in the room. Share of voice, citations, AI agent traffic, AI referral traffic, and other KPIs help you turn that visibility data into actionable learnings.

- The brands winning AI search are building stable prompt libraries organized by topic, persona, and funnel stage to measure what changes over time.

You’ve got questions, we’ve got answers.

We dug through our call transcripts, scanned our support bot logs, and polled our sales, support, and customer success teams.

These are some of the AI search monitoring questions that pop up again and again—and the answers you’re looking for.

How do I know if my brand shows up in ChatGPT answers?

Short answer: You can check manually by asking ChatGPT directly, but that only tells you what's true right now, in that session, for that particular prompt. Consistent, scalable brand monitoring requires a dedicated tool like Scrunch.

Longer answer: Technically, you don't need anything special to know whether your brand is showing up in AI answers. You can just ask ChatGPT—or Perplexity, or Gemini, or Claude, or whatever—directly.

But the limitations become obvious quickly. AI responses vary from session to session, so a single check doesn't tell you whether you're appearing consistently. There's no easy way to compare results across platforms without running the whole process over again, every time.

And if you're tracking more than a handful of prompts, the manual approach falls apart fast.

The real question is: How do you do this across every topic, prompt, and platform you care about without losing your mind?

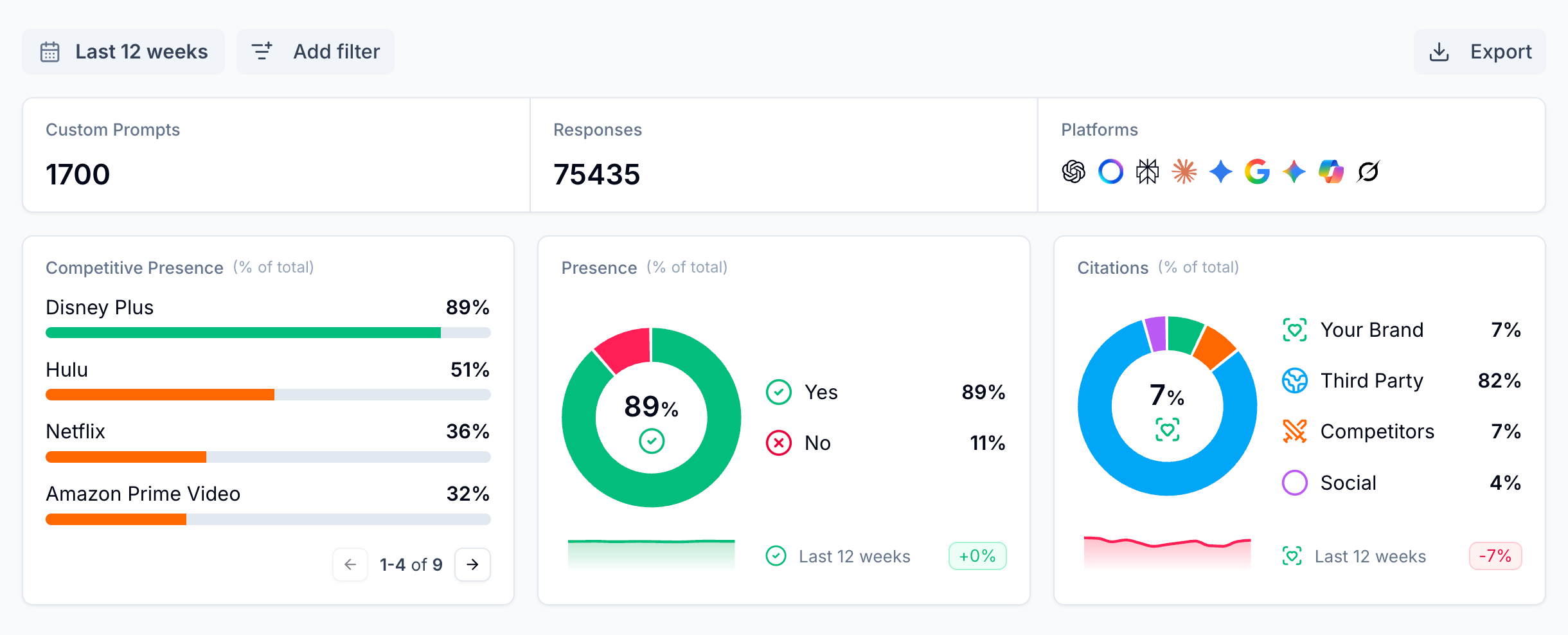

That's exactly what Scrunch's monitoring product is built for. You define the prompts that matter to your business—or let Scrunch generate them for you—and we run them consistently across every major AI platform.

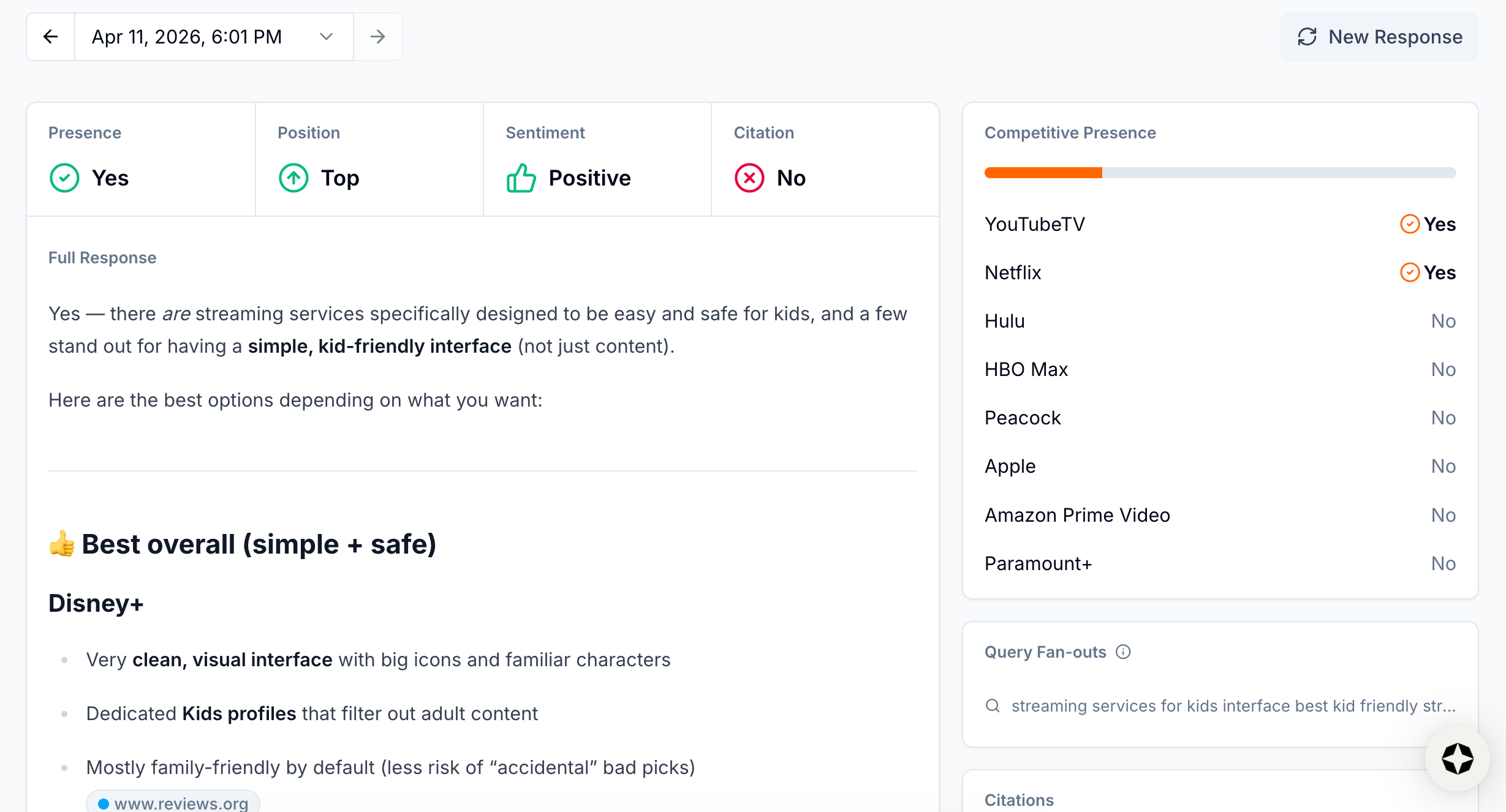

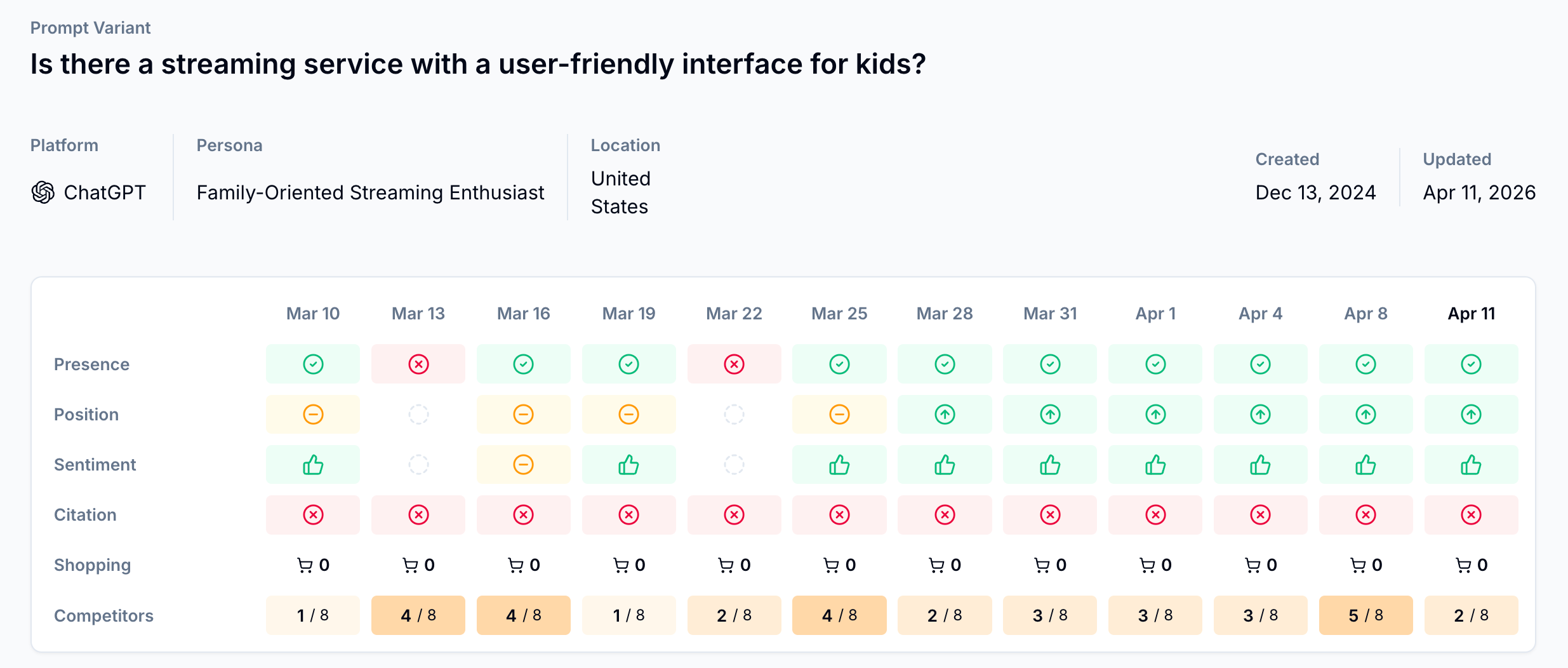

Scrunch tracks whether your brand appears, where it appears, how it's described, and which sources AI platforms are pulling from when they talk about you.

The result isn't a one-time snapshot. It's a living record of how your brand is represented in AI over time.

Data is updated continuously so you can spot trends, catch drops, and measure the impact of changes you make. It's also filterable by topic, persona, funnel stage, country, platform, custom tag, and more.

Related: There’s a difference between your brand and your products. Scrunch also offers complete visibility into which of your products are showing up in AI search results, how they compare to competitors’ products, which retailers are capturing clicks, and which prompts are driving the results as part of its Shopping feature.

How do I measure which prompts or topics I'm getting visibility for in AI?

Short answer: Build a prompt library that mirrors how your customers think, organize it into topic clusters, and track it consistently over time with a technology like Scrunch.

Longer answer: Use keyword research to identify high-volume query patterns in your category and understand how your customers talk.

What language do they use to describe their problem? What comparisons are they making? What outcomes are they searching for?

Supplement keyword data with real-world customer research. Sales call recordings, support tickets, and customer interviews are goldmines here.

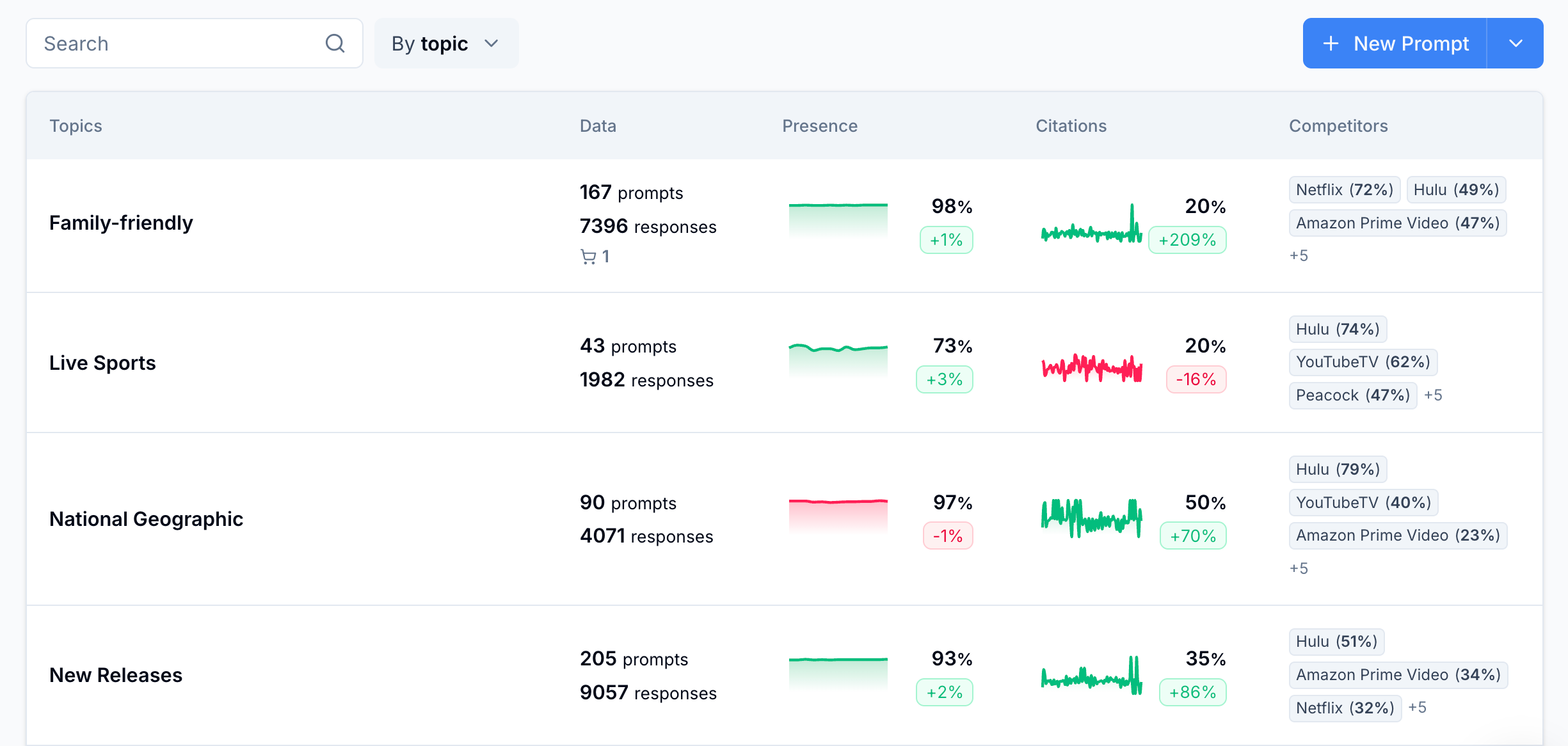

Once you've got your prompts, organize them into high-level topics. That structure makes it easy to see where you're winning and where you're invisible at a high level.

Run those prompts consistently and log your results. Over time, patterns emerge: prompts and topics where you show up reliably, others where you're consistently absent despite strong buyer intent. That gap is your opportunity.

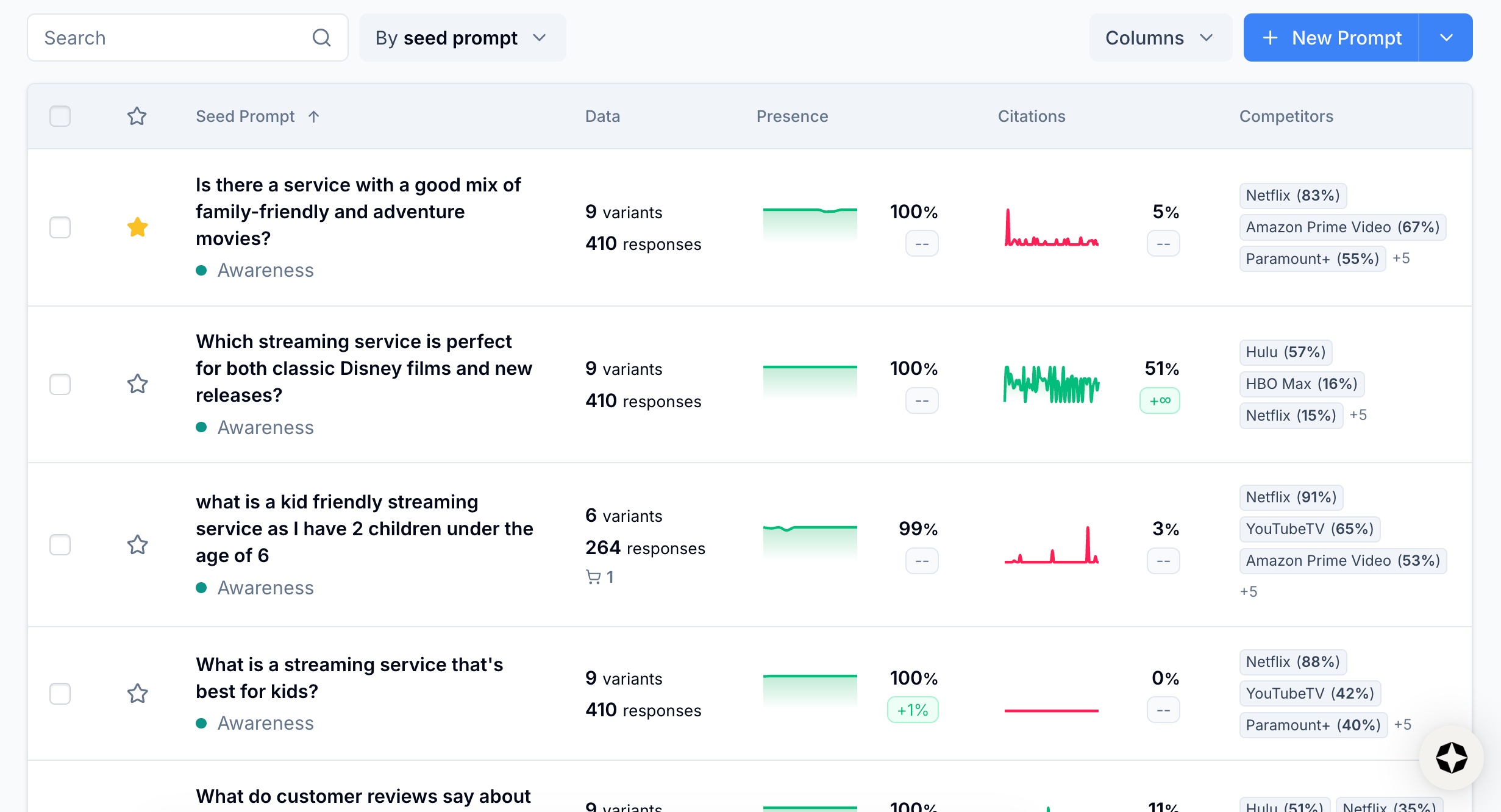

Scrunch is built around this exact approach. Rather than tracking keywords—the SEO paradigm—Scrunch treats prompts as the primary unit of measurement. You can organize prompts by topic, tag them by persona, funnel stage, country, or custom dimension, and filter results to see exactly where you're getting visibility and where you're not.

Customers consistently call out the value of being able to slice data based on the prompts that actually matter for a specific campaign, audience segment, or product line rather than staring at a wall of data.

What's the baseline set of metrics companies are using to monitor AI search?

Short answer: Four metrics form the foundation of any serious AI search monitoring program: brand presence, citations, AI agent traffic, and AI referral traffic—all trackable using Scrunch.

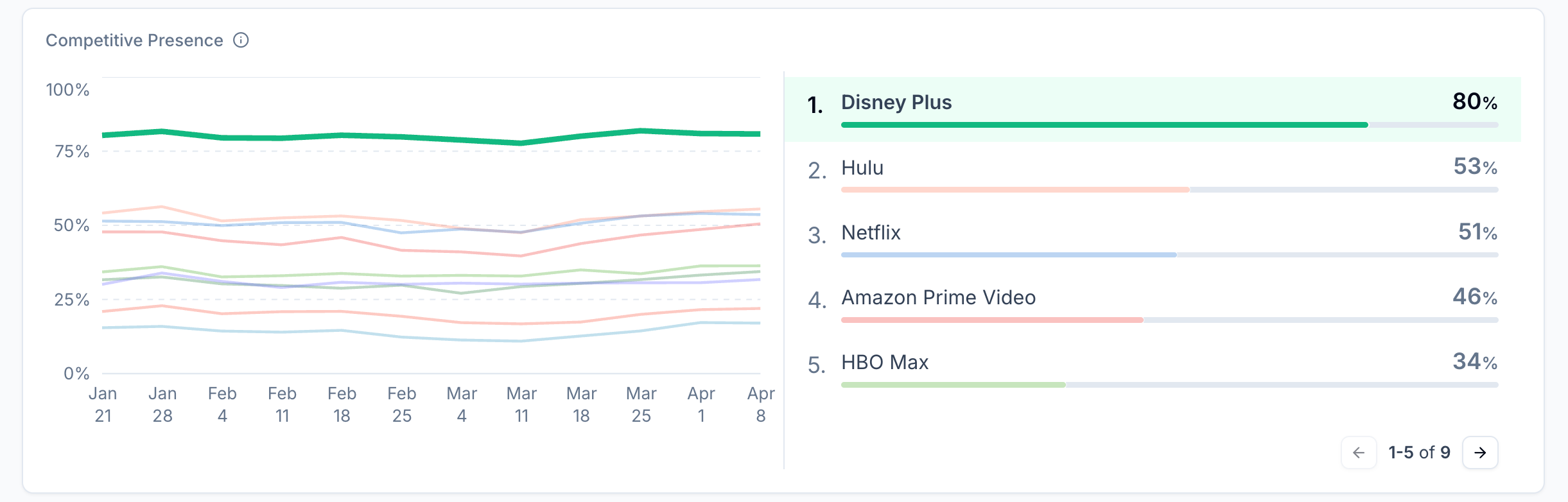

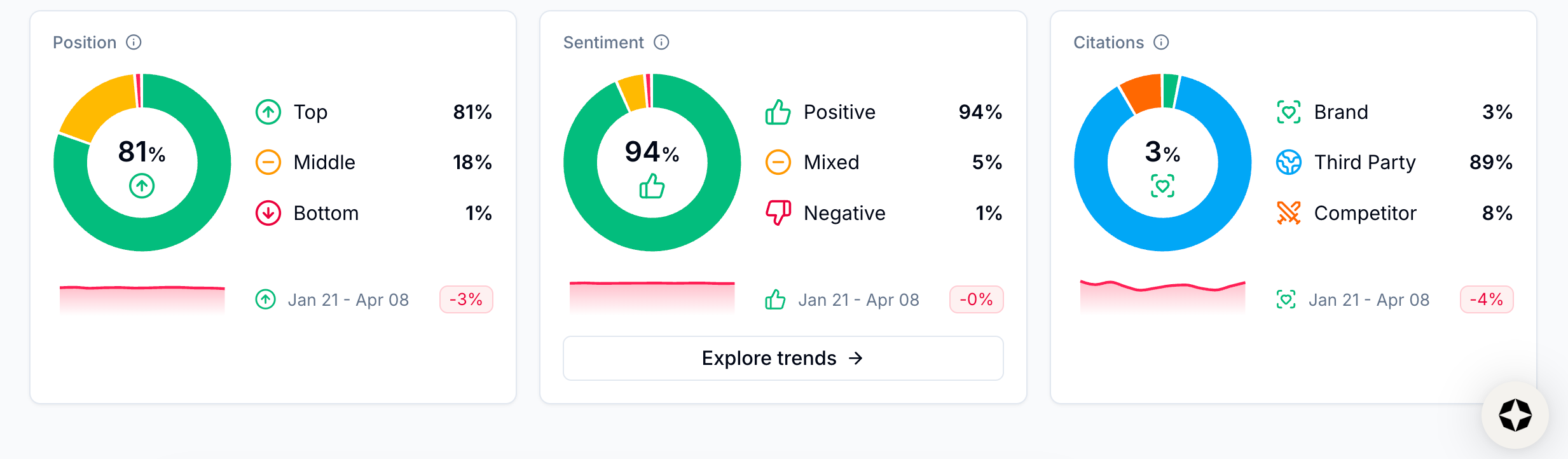

Longer answer: Brand presence is whether your brand is mentioned in response to a given prompt. Tracked across a consistent prompt set over time, presence rate is your baseline visibility metric—the cornerstone everything else is built on. Within brand presence you can also zero in on answer position, answer sentiment, and share of voice (i.e., how your presence compares to competitors).
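To make the two headline numbers concrete, here's a minimal sketch of how presence rate and share of voice could be computed from a log of AI answers. The brand names, the sample answers, and the simple substring matching are all assumptions for illustration. This isn't how Scrunch calculates these metrics, and share of voice in particular can be defined in more than one way.

```python
from collections import Counter

def presence_rate(responses, brand):
    """Fraction of responses that mention `brand` (case-insensitive substring match)."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses)

def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions, counting at most one mention per response."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Hypothetical logged answers for one prompt set (made-up brands).
responses = [
    "Acme and Globex are both popular options.",
    "Many teams choose Globex for this.",
    "Initech is a common pick.",
    "Acme is frequently recommended.",
]

print(presence_rate(responses, "Acme"))
print(share_of_voice(responses, ["Acme", "Globex", "Initech"]))
```

Substring matching is deliberately naive here. A real pipeline would need to handle brand aliases, misspellings, and brand names that double as common words.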

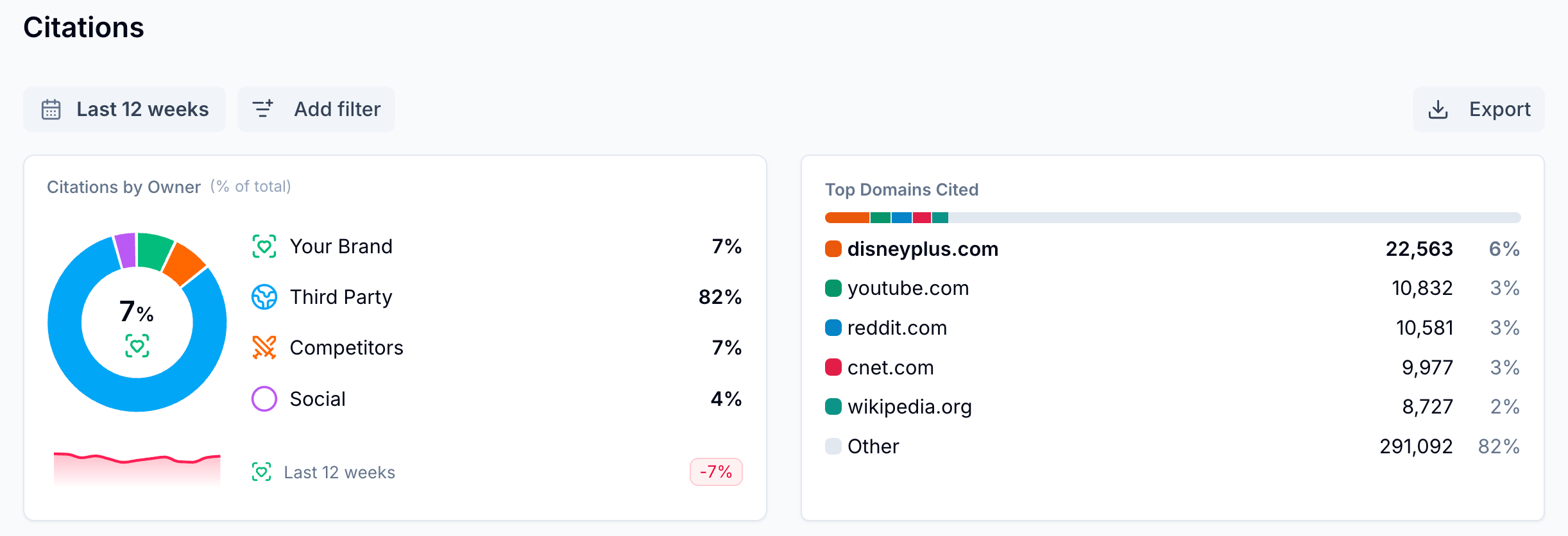

Citations reveal which sources AI is drawing on when it talks about you. This is one of the most actionable metrics because it tells you which content is shaping the AI narrative. Change the sources and you change the narrative.

AI agent traffic tells you when AI is visiting your site and why. When an AI retrieval bot crawls one of your pages, it means a real person just prompted an AI about you or your category. That's high-intent behavior happening upstream of a click. Tracked over time, agent traffic reveals which pages AI finds useful, which platforms are visiting most often, and how activity maps to buyer behavior.

AI referral traffic is what happens on the other end: A user reads an AI response that cites your brand, clicks through, and lands on your site. These visitors are few and far between (AI search is predominantly a zero-click channel), but they convert at a higher rate than traditional organic traffic because they've already done most of their buying research inside the AI interface. AI referral data closes the loop between your AI presence and real business outcomes.

Scrunch puts all of this data at your disposal with built-in tools to help you separate actionable signals from noise.

How do teams compare AI visibility across different models and platforms?

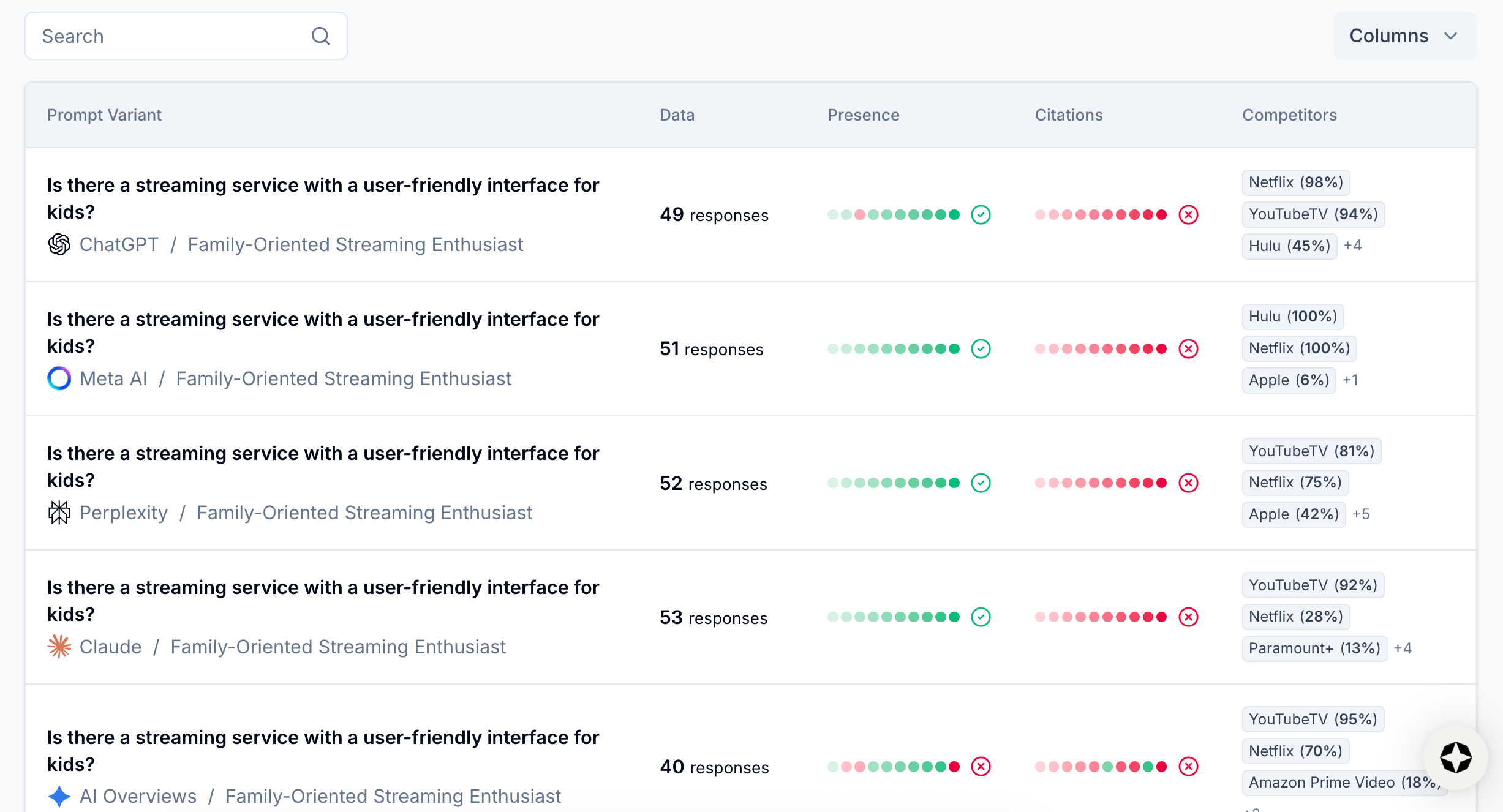

Short answer: Teams that are serious about cross-platform visibility typically use a tool like Scrunch to track the same set of prompts across multiple platforms, then filter by platform to spot where performance diverges. We recommend starting with ChatGPT and Google AI Overviews at a minimum because they have the highest reach.

Longer answer: The manual approach is to pick your most important prompts, run them on each platform, and build a comparison matrix. Even a basic spreadsheet (prompt, platform, brand mentioned, competitor mentioned, etc.) gives you a useful cross-platform starting point.
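If you'd rather keep that spreadsheet in code, here's one way it could look. This is a rough sketch: the prompts, platforms, and column names are made up for illustration, and the rows are results you'd fill in by hand after checking each platform.

```python
import csv
import io

# Hypothetical manual log: one row per (prompt, platform) check.
rows = [
    {"prompt": "best crm for startups", "platform": "ChatGPT",
     "brand_mentioned": True, "competitor_mentioned": True},
    {"prompt": "best crm for startups", "platform": "Perplexity",
     "brand_mentioned": False, "competitor_mentioned": True},
    {"prompt": "crm with email automation", "platform": "ChatGPT",
     "brand_mentioned": True, "competitor_mentioned": False},
    {"prompt": "crm with email automation", "platform": "Perplexity",
     "brand_mentioned": True, "competitor_mentioned": True},
]

def to_csv(rows):
    """Serialize the log to CSV text so it can be pasted into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

def presence_by_platform(rows):
    """Share of logged prompts on each platform where the brand was mentioned."""
    totals, hits = {}, {}
    for row in rows:
        platform = row["platform"]
        totals[platform] = totals.get(platform, 0) + 1
        hits[platform] = hits.get(platform, 0) + (1 if row["brand_mentioned"] else 0)
    return {platform: hits[platform] / totals[platform] for platform in totals}

print(to_csv(rows))
print(presence_by_platform(rows))
```

With only one run per prompt and platform, treat the per-platform numbers as a rough first look, not a reliable signal.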

The downside is scale. Response variance means single runs can be misleading, and doing this manually across multiple platforms every week isn't sustainable for any team.

Scrunch builds cross-platform comparison into its product from the start. You set up your prompts once and we run them across all major AI platforms simultaneously. Instead of manually stitching together results, you get a single view that can be filtered by platform.

This makes it easy to quickly answer questions like, "Are we underperforming on Perplexity specifically?" or "Is our visibility gap wider on Gemini than ChatGPT?"

That kind of platform-level breakdown matters more as different AI platforms capture different buyer segments.

Our advice: Go multi-model and then let the data tell you where your category is most active, not just where you assume it is.

What benchmarks should I use to evaluate AI search performance?

Short answer: Build your own baseline first, then measure against competitors using a tool like Scrunch. AI search is too new and too variable by category for industry-wide standards to be reliable.

Longer answer: Before you make any changes to your content or site, run your prompts and record your starting numbers. That becomes your control state. Every experiment you run afterward is measured against that baseline—not against some external benchmark that may not reflect your category.

Once you have a baseline, compare your performance against specific competitors for the same prompts. A 30% brand presence rate means little in isolation. But if your two main competitors are sitting at 40% and 55% for the same topics, that tells you exactly where the gap is and how big it is.
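Here's that comparison as a quick sketch, using the example rates from the paragraph above. The brand labels and the function name are placeholders, and the gap is expressed in percentage points.

```python
# Presence rates in percent for the same prompts (illustrative numbers from the text).
presence_pct = {"your_brand": 30, "competitor_a": 40, "competitor_b": 55}

def gaps_vs(presence_pct, own):
    """Percentage-point gap between each competitor and your own brand."""
    return {
        name: rate - presence_pct[own]
        for name, rate in presence_pct.items()
        if name != own
    }

print(gaps_vs(presence_pct, "your_brand"))  # {'competitor_a': 10, 'competitor_b': 25}
```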

Scrunch builds competitive benchmarking directly into its monitoring workflow. You set up your competitors alongside your own brand and Scrunch tracks all of them across the same prompts so you're always looking at relative performance, not a number without context.

How can I compare my AI visibility against competitors without relying on guesswork?

Short answer: Run the same prompts against the same AI platforms for your brand and your competitors in a platform like Scrunch. Consistent, simultaneous tracking is the only way to get a reliable read.

Longer answer: The manual approach works (kind of) at a smaller scale: Pick your most important prompts, run them in each platform you care about, and build a comparison matrix. Logging prompt, platform, brand mentioned (yes/no), competitors mentioned, etc. gives you a high-level picture.

The problem is volume and consistency. Say you're tracking 100 prompts across five AI platforms. That's 500 individual queries, and because AI responses vary from session to session, you'd want to run each multiple times to get a reliable signal. Doing that manually every week isn't realistic.
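The arithmetic adds up quickly. Here's a small sketch of the weekly workload, where the three runs per prompt is an assumed number for illustration, not a recommendation from the text.

```python
def weekly_queries(prompts, platforms, runs_per_prompt=1):
    """Total individual queries needed for one weekly pass."""
    return prompts * platforms * runs_per_prompt

print(weekly_queries(100, 5))                     # 500 single-run queries
print(weekly_queries(100, 5, runs_per_prompt=3))  # 1500 with three runs each (assumed)
```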

Scrunch makes competitive comparison a first-class feature. You define which competitors you want to track and Scrunch runs them on the same prompt set as your brand. So instead of manually cobbling together results, you get a single view with topic, persona, funnel stage, AI platform, and more as filter dimensions.

Customers frequently use this to build an internal business case—showing leadership not just their own visibility numbers, but exactly where competitors are outperforming them and for which prompts. That competitive framing tends to make the stakes a lot clearer.

How many prompts should I be tracking?

Short answer: Use this heuristic as your starting point: X [# of topic clusters] × Y [12-15 questions per cluster] = Z [# of prompts to track]. The goal is a representative sampling of data you can track consistently over time using a tool like Scrunch.

Longer answer: The approach works like this: Start with your SEO keywords, paid search keywords, and major product and content categories. Sort them into topic clusters based on common themes—product use cases, competitor comparisons, industry questions, and so on.

Each cluster typically has somewhere between 5 and 15 keywords associated with it. Then write 12-15 questions for each cluster that span the full customer journey, from awareness to evaluation to comparison, and include both branded and non-branded variations.

Multiply clusters by questions and you have your estimated prompt count.
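As a quick worked example of that multiplication, here's a sketch that turns a cluster count into a prompt range. The 12-15 questions per cluster comes from the heuristic above. The eight clusters are a made-up number for illustration.

```python
def estimated_prompt_range(topic_clusters, min_questions=12, max_questions=15):
    """Return the (low, high) prompt count for a given number of topic clusters."""
    return topic_clusters * min_questions, topic_clusters * max_questions

low, high = estimated_prompt_range(8)  # 8 clusters is a hypothetical example
print(f"Track roughly {low}-{high} prompts to start")  # Track roughly 96-120 prompts to start
```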

Once you have enough data—Scrunch recommends at least 2 weeks—identify which prompts are pulling their weight and cut the ones that are redundant (Scrunch’s Topic Prompt Optimizations feature makes this easy).

Think of your prompt set like a network of weather stations. Meteorologists don't measure the temperature of every square foot of a city—they place sensors at consistent locations and read them over time. One data point tells you very little. A stable network, read consistently, reveals actual patterns.

Start broad, then prune. You can get solid coverage with fewer prompts than you might expect.

How do I track whether improvements I make are actually increasing my AI presence?

Short answer: Establish a clean baseline using a tool like Scrunch before you change anything. Without a pre-change baseline, there's nothing to measure improvement against.

Longer answer: Here's how the process works:

1. Set your baseline first. Before you change anything, run your prompt set and record your brand presence, citations, AI agent traffic, AI referral traffic, etc. That's your control state.

2. Make one change at a time if you can. AI search attribution is genuinely hard—there are a lot of variables at play. Isolating them makes it much easier to draw conclusions.

3. Give it time. AI models update their indices on different schedules. Give changes at least 2-3 weeks to show up in your metrics before drawing conclusions.

4. Look for corroboration across signals. If your brand presence rate increases and citations from a newly optimized page also increase, that's stronger evidence of impact than presence rate movement alone.
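The steps above can be sketched as a simple before-and-after comparison. The metric names and numbers below are hypothetical, and "corroborated" here just means every chosen signal moved up, which is a deliberately simple rule and not a statistical test.

```python
def metric_deltas(baseline, current):
    """Change in each metric that appears in both snapshots."""
    return {name: current[name] - baseline[name] for name in baseline if name in current}

def corroborated(deltas, signals):
    """True only if every listed signal increased relative to the baseline."""
    return all(deltas.get(signal, 0) > 0 for signal in signals)

# Hypothetical snapshots: before the change, and a few weeks after it.
baseline = {"presence_rate_pct": 30, "citations_from_page": 4}
current = {"presence_rate_pct": 36, "citations_from_page": 9}

deltas = metric_deltas(baseline, current)
print(deltas)  # {'presence_rate_pct': 6, 'citations_from_page': 5}
print(corroborated(deltas, ["presence_rate_pct", "citations_from_page"]))  # True
```

Because AI responses vary from session to session, small deltas on a single run may just be noise. Comparing averages over several runs gives a steadier read.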

Scrunch's Site Maps feature makes prioritizing improvement opportunities and tracking the results a lot easier. You can use it to identify underperforming pages, make fixes, and see how performance improves over time, page by page.

That end-to-end visibility, from "this page has an issue" to "we fixed it" to "here's how our AI presence improved," is something we find most teams are hungry for when they’re first getting started in AI search.

This isn't every question our team fields about AI search monitoring, but it covers a lot of conversational ground.

Got more questions? See our FAQs.

Want to dig deeper? Check out our AI search guide.

Ready to see for yourself? Get in touch or take Scrunch for a test drive.
