Nothing lasts forever—AI citations included.

Citations shape AI answers and provide a direct link from said answer to your brand and products. Naturally, businesses want to be the source that AI links out to.

Securing a citation (or placement in a cited source) is one thing. We’ve written a lot about how to go about it and built partnerships with companies like Noble and Stacker to help our customers do it at scale.

But we also wanted to understand what comes after. Namely, what brands need to do to hang on to a citation.

In partnership with the team at Stacker, we analyzed 3.5 million citation events across AI platforms from September 2025 to March 2026.

We grouped sources into cohorts (sources that appeared in a given week) and measured how many were still being cited in subsequent weeks.

From that survival curve, we calculated a half-life: the number of weeks it takes for 50% of a cohort’s citations to disappear.
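To make the cohort and half-life idea concrete, here is a minimal sketch in Python. It is an illustration, not the pipeline behind this study: the function names, the toy domains, and the linear interpolation between weekly observations are all assumptions.

```python
from typing import List, Optional, Set


def survival_curve(weekly_sources: List[Set[str]], cohort_week: int) -> List[float]:
    """Fraction of the cohort from `cohort_week` still cited in each later week."""
    cohort = weekly_sources[cohort_week]
    if not cohort:
        return []
    return [
        len(cohort & week) / len(cohort)
        for week in weekly_sources[cohort_week:]
    ]


def half_life(curve: List[float]) -> Optional[float]:
    """Weeks until survival first reaches 50%, linearly interpolated.

    Returns None if the curve never drops to 0.5 in the observed window.
    """
    for t in range(1, len(curve)):
        prev, cur = curve[t - 1], curve[t]
        if cur <= 0.5 < prev:
            return (t - 1) + (prev - 0.5) / (prev - cur)
    return None


# Toy data (hypothetical): the set of cited domains observed in each week.
weeks = [
    {"a.com", "b.com", "c.com", "d.com"},
    {"a.com", "b.com", "c.com"},
    {"a.com", "b.com", "e.com"},
    {"a.com", "f.com"},
]
curve = survival_curve(weeks, cohort_week=0)
print(curve)             # [1.0, 0.75, 0.5, 0.25]
print(half_life(curve))  # 2.0
```

In this toy example, half of the week-0 cohort is gone by week 2, so the half-life is 2 weeks.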

You can dig into our full findings below.

Our primary discovery? For the average source in our dataset, citation activity drops by half in about 4–5 weeks.

But “average” masks a lot. Which AI platform is doing the citing has a major impact, as does the type of source.

The main takeaway for brands: Solid citation strategy requires playing both offense and defense.

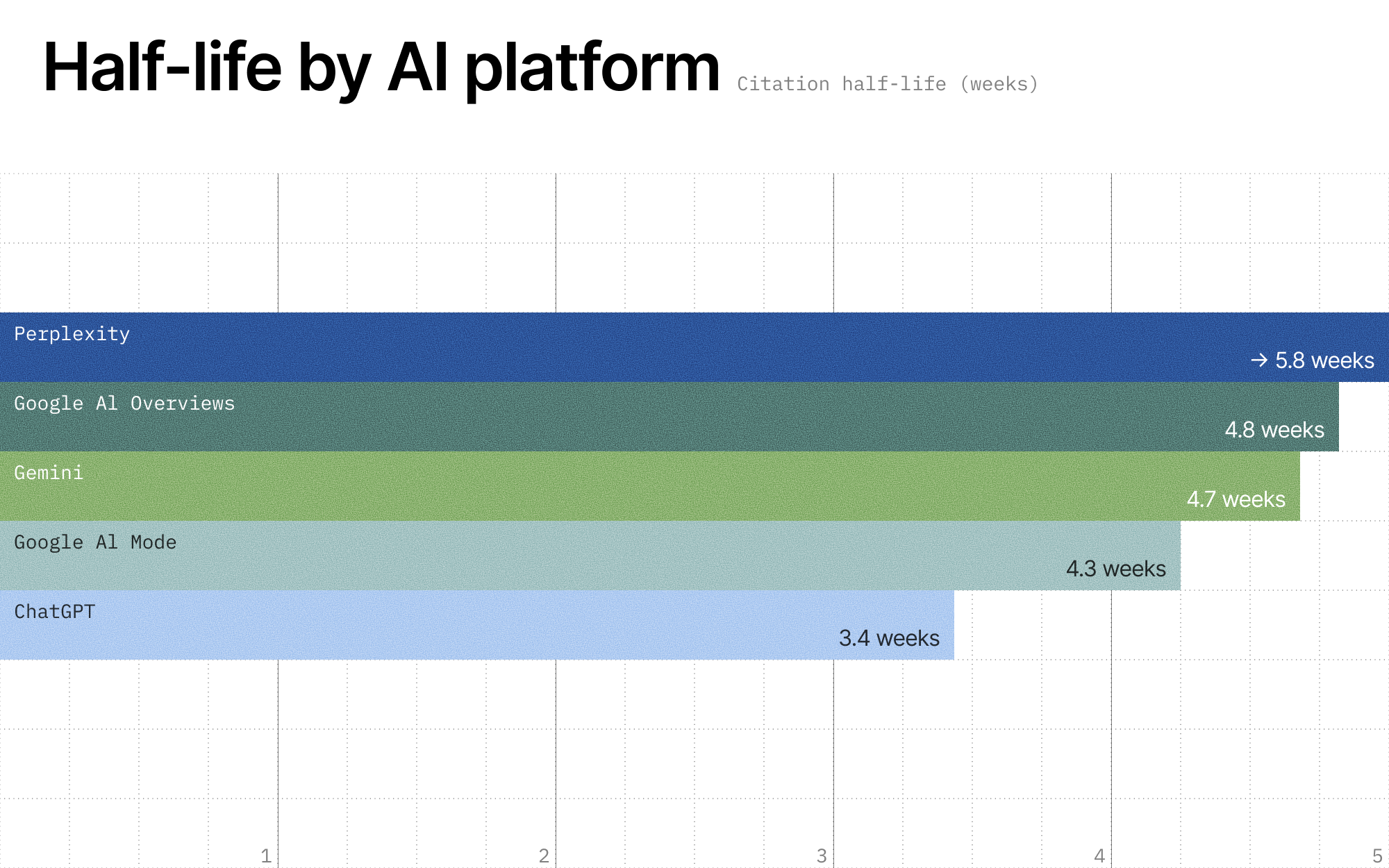

Platform plays a major role in citation durability

Across all sources cited by AI platforms, citation activity drops by half in roughly 4.5 weeks.

That’s a meaningful window, but also a fast-moving one. Without ongoing content effort, many AI citations are effectively ephemeral, cycling through every month or so.

But this baseline obscures real variation across platforms and, to a lesser degree, industries.

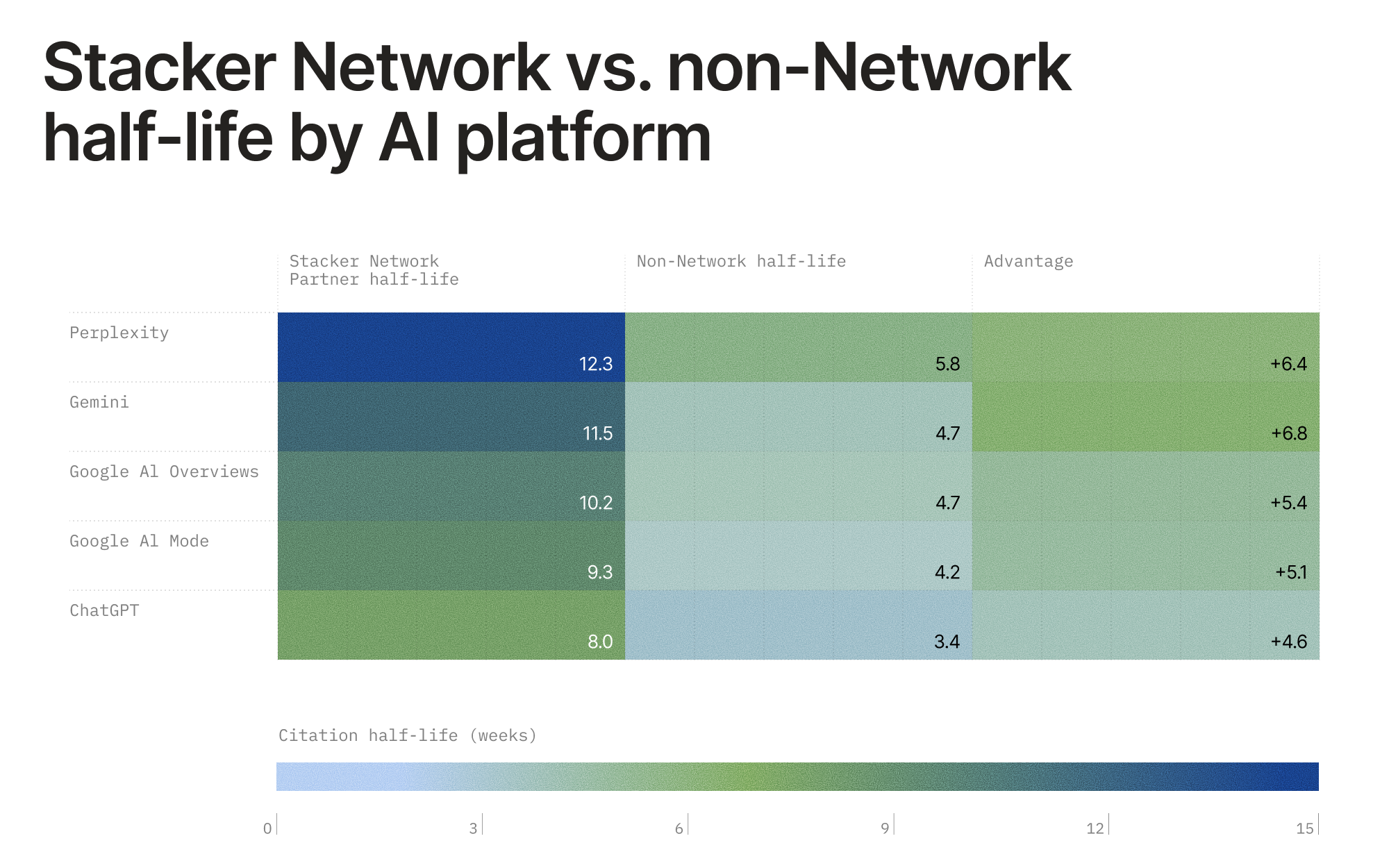

ChatGPT cycles through sources the fastest (3.4 weeks). Perplexity citations, at 5.8 weeks, last about 70% longer.

Google’s three surfaces—AI Mode, Gemini, and AI Overviews—cluster together in the mid-range (4.3–4.8 weeks), suggesting a relatively consistent citation refresh cycle across Google’s AI ecosystem.

For brands investing in AI search visibility, this means the platform you’re optimizing for significantly affects how often you need to “re-earn” citations.
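For a rough sense of what those half-lives mean in practice, here is a small Python sketch. It assumes citations decay exponentially, which is a simplification introduced here for illustration (the half-lives in this study come from observed survival curves, not from this formula). The function name `fraction_remaining` is hypothetical.

```python
def fraction_remaining(weeks_elapsed: float, half_life_weeks: float) -> float:
    """Share of a cohort still cited after `weeks_elapsed` weeks.

    Assumes simple exponential decay, an illustrative simplification.
    """
    return 0.5 ** (weeks_elapsed / half_life_weeks)


# Platform half-lives (in weeks) reported above.
HALF_LIVES = {"ChatGPT": 3.4, "Perplexity": 5.8}

# Relative difference between the two platform half-lives.
pct_longer = (HALF_LIVES["Perplexity"] / HALF_LIVES["ChatGPT"] - 1) * 100
print(f"Perplexity vs. ChatGPT: {pct_longer:.1f}% longer")  # 70.6% longer

# Estimated share of a cohort still cited after 8 weeks on each platform.
for platform, weeks in HALF_LIVES.items():
    print(platform, round(fraction_remaining(8, weeks), 2))
```

Under that assumption, a cohort on the slower-cycling platform retains a visibly larger share of its citations at any given week, which is why refresh cadence should be set per platform.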

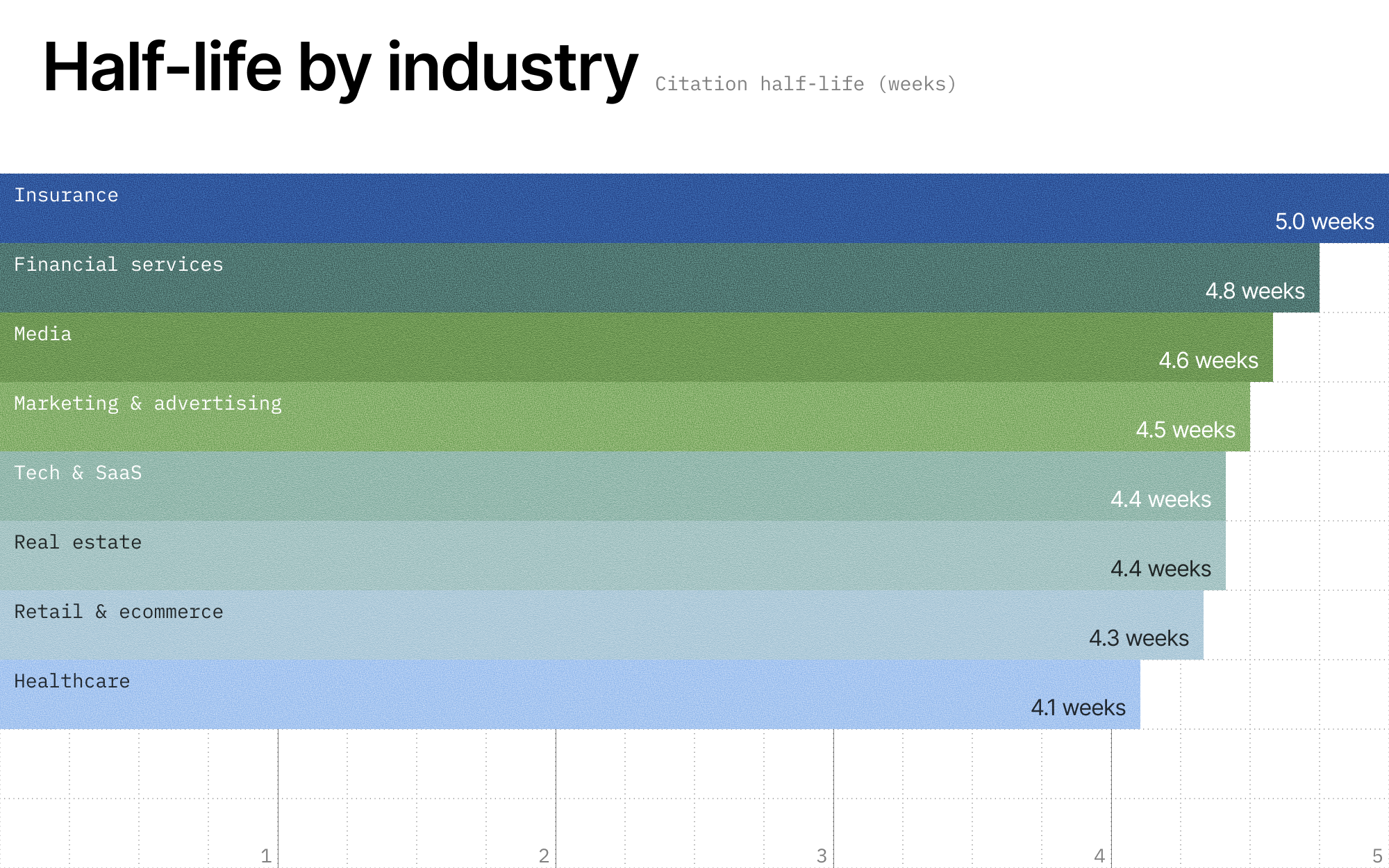

Industry differences are real but narrower—what platform you’re cited on ultimately matters more than what industry you’re in.

Insurance (5 weeks) and financial services (4.8 weeks) sources are the stickiest, likely reflecting the authoritative, evergreen nature of content in those verticals.

Healthcare (4.1 weeks) and retail & ecommerce (4.3 weeks) sources turn over the fastest—categories where timeliness and recency may drive more frequent source rotation by AI platforms.

The key implication: Platform strategy has more leverage than vertical when it comes to citation durability. Going by platform-level averages, a healthcare brand cited on Perplexity (5.8 weeks) would still be expected to outlast an insurance brand cited on ChatGPT (3.4 weeks).

Some sources have longer shelf lives

We conducted our research in partnership with the team at Stacker, in part to understand what role content syndication via editorial sources (i.e., news outlets) may play in citation durability.

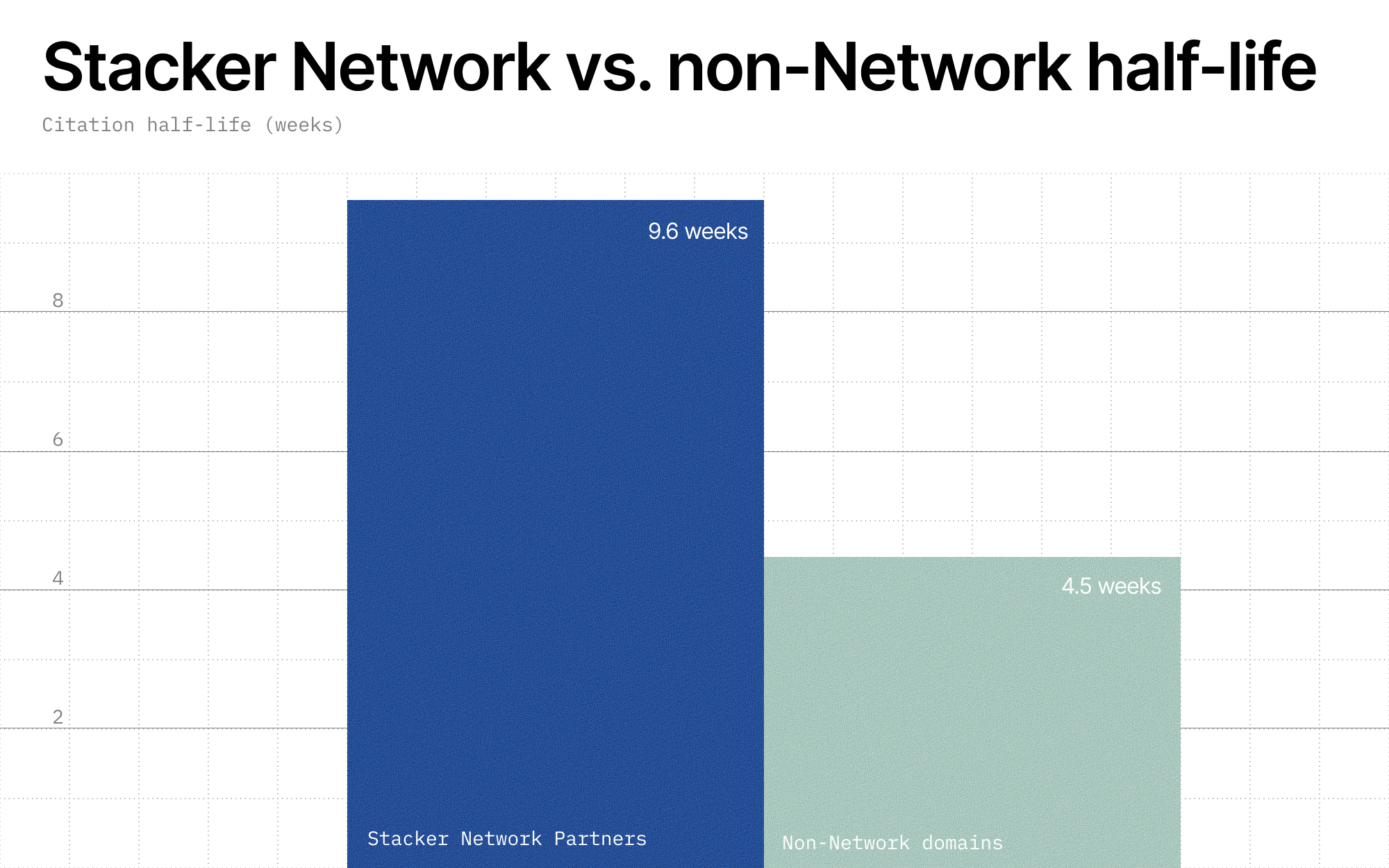

Our finding? Citations from domains in the Stacker Partner Network—a curated network of more than 4,000 news publishers—last twice as long as the average non-Network domain.

This is not a marginal difference. While a typical source needs to re-earn its AI citations roughly every month, partner-associated domains showed estimated half-lives about twice as long.

Stacker Partner Network domains are 100% editorial. While we can't say so definitively, our research suggests that news outlets make more durable citation sources.

The durability gap between partner and non-partner sources is present across all AI platforms, with no exceptions.

Stacker Partner Network domains on Perplexity and Gemini see the largest absolute advantages: over 6.5 additional weeks of citation durability. Even on ChatGPT (the lowest-durability platform), partner sources last 2.4x longer than non-Network domains.

The Perplexity-partner combination is the strongest in our dataset: 12.3 weeks—more than 3x the average non-Network ChatGPT citation and over twice the average non-Network Perplexity citation.

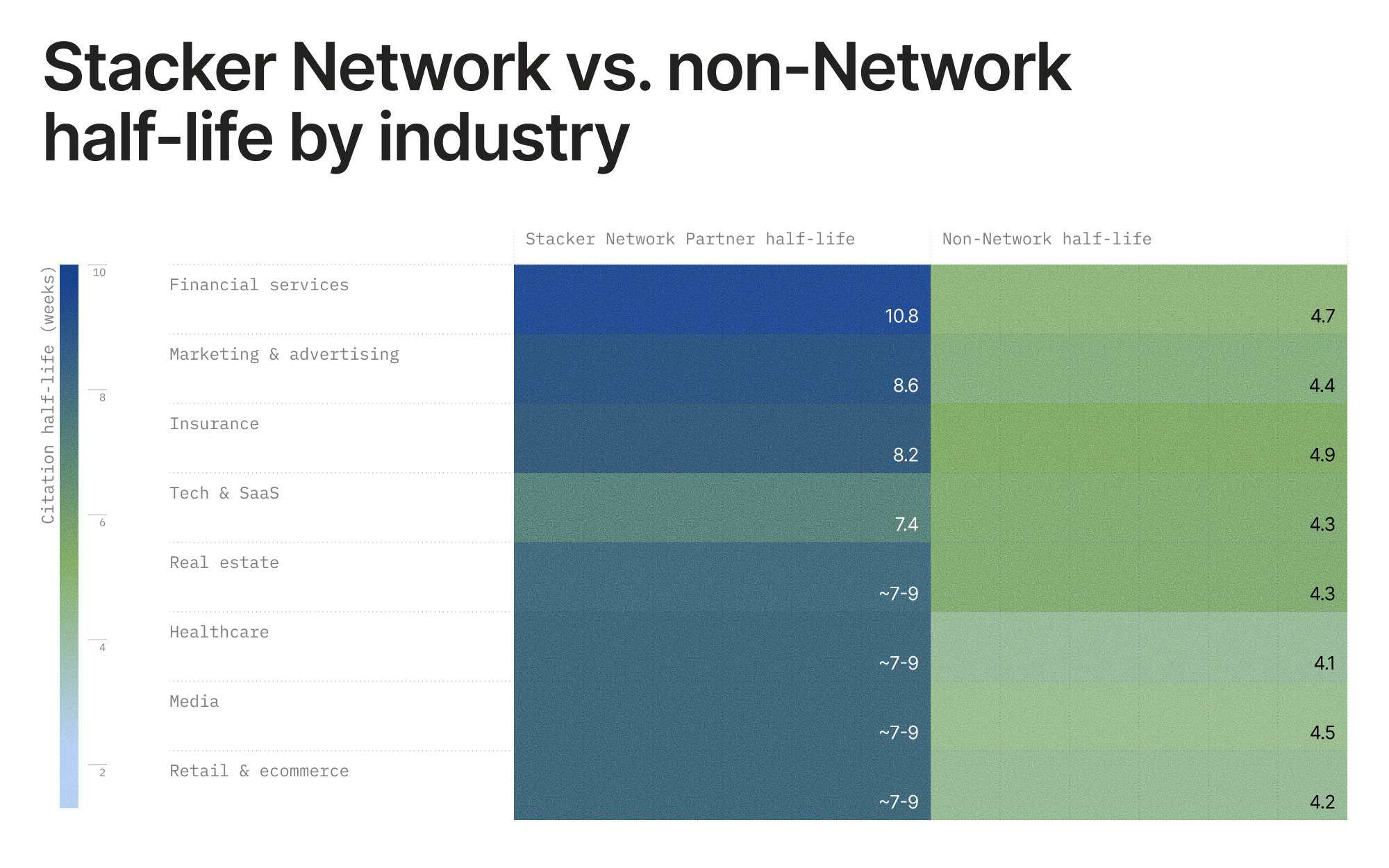

The durability gap is consistent across all industry verticals as well.

Partner cells in some verticals have wider confidence intervals due to smaller sample sizes, but the directional advantage is consistent. Financial services partner sites are the stickiest of any industry vertical at 10.8 weeks.

Earning citations is only step one

A few TL;DR takeaways for marketing and growth teams:

- ~4.5 weeks is the effective “refresh window” for average AI citations. Content strategies should account for this cadence.

- ChatGPT has the fastest turnover—brands targeting ChatGPT visibility need a more aggressive and frequent content refresh strategy.

- Perplexity is the highest-durability platform. Citations earned there persist longer than anywhere else.

- Industry matters at the margin. Insurance and financial services sources have a natural durability edge; healthcare and retail & ecommerce sources need to work harder to maintain sustained visibility.

- Leveraging a technology and service like Stacker can provide a powerful advantage. While competitors are cycling out every 4–5 weeks, you’re staying in the mix for nearly 10. That’s fewer resources spent re-earning visibility and more sustained presence in AI-generated responses.

Here’s what brands should do to earn and retain citations:

Monitor citation performance routinely

The citations you have today can disappear tomorrow. Keeping tabs on your citation share isn't a quarterly exercise—it's an ongoing one.

That means knowing which sources are being cited for the prompts that matter most to your business, tracking when those citations shift, and acting quickly when a must-win source gets displaced.
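As a simple illustration of what tracking citation shifts can look like, the Python sketch below compares two weekly snapshots and reports which sources were lost or gained for each prompt. The data shape (a mapping from prompt to the set of cited domains), the function name, and the sample prompts are all hypothetical.

```python
from typing import Dict, Set


def citation_shifts(
    previous: Dict[str, Set[str]],
    current: Dict[str, Set[str]],
) -> Dict[str, Dict[str, Set[str]]]:
    """Return sources lost and gained per prompt between two snapshots."""
    shifts = {}
    for prompt in previous.keys() | current.keys():
        before = previous.get(prompt, set())
        after = current.get(prompt, set())
        lost, gained = before - after, after - before
        if lost or gained:  # skip prompts whose cited sources did not change
            shifts[prompt] = {"lost": lost, "gained": gained}
    return shifts


# Hypothetical snapshots of cited domains for two tracked prompts.
last_week = {
    "best crm for startups": {"a.com", "b.com"},
    "crm pricing": {"c.com"},
}
this_week = {
    "best crm for startups": {"a.com", "d.com"},
    "crm pricing": {"c.com"},
}
shifts = citation_shifts(last_week, this_week)
print(shifts)
```

Here only the first prompt is reported: b.com was displaced and d.com took its place.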

Prioritize citation acquisition and retention

Whether your goal is earning placement in a dominant source or beating it outright, start by understanding which sources actually drive AI responses in your category.

A tool like Scrunch’s Influence Score makes this straightforward: It surfaces which citation sources to prioritize by multiplying the percentage of AI responses that have cited a source by the number of unique prompts. High Influence Score? That's your target.
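The arithmetic described above can be sketched in a few lines of Python. This is an illustration of the stated formula, not Scrunch's actual implementation: the input format and function name are hypothetical, and we assume the prompt count means the unique prompts for which the source was cited.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def influence_scores(responses: Iterable[Tuple[str, Set[str]]]) -> Dict[str, float]:
    """Score = (% of responses citing the source) x (unique prompts citing it).

    `responses` is an iterable of (prompt, cited_sources) pairs.
    Assumption: "unique prompts" counts only prompts where the source appeared.
    """
    responses = list(responses)
    citing_responses = defaultdict(int)
    citing_prompts = defaultdict(set)
    for prompt, sources in responses:
        for source in sources:
            citing_responses[source] += 1
            citing_prompts[source].add(prompt)
    total = len(responses)
    return {
        source: (citing_responses[source] / total * 100) * len(citing_prompts[source])
        for source in citing_responses
    }


# Hypothetical sample: four AI responses across two prompts.
sample = [
    ("best term life insurance", {"a.com", "b.com"}),
    ("best term life insurance", {"a.com"}),
    ("how to compare insurers", {"a.com", "c.com"}),
    ("how to compare insurers", {"c.com"}),
]
scores = influence_scores(sample)
print(sorted(scores.items(), key=lambda kv: kv[1], reverse=True))
# [('a.com', 150.0), ('c.com', 50.0), ('b.com', 25.0)]
```

In this sample, a.com is cited in 75% of responses across both prompts, so it ranks well ahead of sources that appear for only one prompt.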

Balance durability and demand

A source that holds its AI citation for a dozen weeks is only valuable if someone's actually typing that prompt into an AI platform. Durability without demand is like a billboard on a road no one drives.

Prompt monitoring tells you how long you're holding citations and when your position shifts, but visibility into AI search trends tells you which topics are actually gaining traction. Pull back the lid on both.

Regularly update and optimize content

It’s reasonable to assume that recency matters for AI citations, but it’s tough to say exactly how much without follow-up research.

That doesn’t mean publishing more content is necessarily the fix. More often, the fix is making what you already have more authoritative and more useful to AI. Revisit your highest-traffic pages, fill in gaps, and sharpen your answers. The goal is content that AI platforms don't just find, but keep coming back to.

Make citing your content as easy as possible

If AI can't read your content, it can't cite it. It's that simple.

Technical issues—bot accessibility blocks, JavaScript rendering, poor page structures—create invisible barriers between your content and the AI platforms your buyers are using. Auditing your site for these problems should be a prerequisite.
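One of these barriers, bot accessibility, is easy to spot-check. The Python sketch below uses the standard library's `urllib.robotparser` to test whether a robots.txt file blocks particular crawlers from a URL. The robots.txt content and the example.com URL are made up, and the user agent names (GPTBot, PerplexityBot) are examples only; check each platform's documentation for the agents it currently uses. This covers robots.txt rules only, not JavaScript rendering or page structure.

```python
from typing import Iterable, List
from urllib.robotparser import RobotFileParser


def blocked_agents(robots_txt: str, url: str, agents: Iterable[str]) -> List[str]:
    """Return the user agents that this robots.txt disallows from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_agents(
    ROBOTS_TXT,
    "https://example.com/pricing",
    ["GPTBot", "PerplexityBot"],
)
print(blocked)  # ['GPTBot']
```

To audit a live site, you could fetch its real robots.txt and pass the text to the same function.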

Beyond fixing what's broken, you can go further: Optimize your content for how AI actually consumes it, then deliver it directly to AI user agents via technology like Scrunch’s Agent Experience Platform (AXP).

Instead of hoping AI finds the right content on your page, AXP ensures it does by automatically serving an AI-optimized experience to agent traffic without touching the human experience.

Scale citation reach with tools like Stacker

Our research shows a clear pattern: Citations from trusted editorial sources last longer. Partner domains in the Stacker Partner Network held citations for twice as long as the average non-Network domain, and the advantage held across every platform and industry we analyzed.

That durability gap is hard to close through owned content alone. That's where a tool like Stacker comes in, turning your content into earned placements across a network of vetted publishers.

Citations aren't a one-time win—and not all citations are created equal.

Earn them, keep them, and make sure the right sources are working in your favor.

Power your citation strategy with Scrunch

Take action on citation shifts. Start a 7-day free trial or get in touch to see how Scrunch can help you win and keep more AI citations.

A quick note on our methodology:

To make these estimates stable enough to compare across platforms, industries, and beyond, we:

- Tracked cohorts of cited sources over time and measured how quickly those cohorts decayed week by week

- Smoothed short-term volatility so one noisy week didn’t distort the overall pattern

- Stabilized smaller groups by partially pooling them toward related groups when sample sizes were thin

- Used 200 bootstrap resamples to estimate confidence intervals

- Compared multiple model variants against holdout data and published the best-performing approach
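Two of the steps above, partial pooling and bootstrap confidence intervals, can be sketched in Python as follows. This is an illustration, not the model used in this study: the shrinkage weight `n / (n + prior_strength)`, the use of the median observed duration as a half-life proxy, the percentile interval, the sample numbers, and all function names are assumptions.

```python
import random
import statistics
from typing import List, Tuple


def partially_pooled(group_estimate: float, group_n: int,
                     parent_estimate: float, prior_strength: float = 50.0) -> float:
    """Shrink a small group's estimate toward its parent group's estimate.

    Groups with few observations lean on the parent; large groups keep their own value.
    """
    weight = group_n / (group_n + prior_strength)
    return weight * group_estimate + (1 - weight) * parent_estimate


def bootstrap_ci(durations: List[float], n_resamples: int = 200,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap interval for the median citation duration (weeks)."""
    rng = random.Random(seed)
    estimates = sorted(
        statistics.median(rng.choices(durations, k=len(durations)))
        for _ in range(n_resamples)
    )
    lower = estimates[int((alpha / 2) * n_resamples)]
    upper = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


# Hypothetical citation durations (in weeks) for one small segment.
durations = [1, 2, 2, 3, 4, 4, 5, 6, 8, 12]
low, high = bootstrap_ci(durations)
print(f"median: {statistics.median(durations)} weeks, 95% CI: ({low}, {high})")

# A thin segment (50 observations) pulled halfway toward its parent group.
print(partially_pooled(group_estimate=10.0, group_n=50, parent_estimate=5.0))  # 7.5
```

The fixed seed keeps the interval reproducible between runs; a wider interval signals that a segment has too few observations to read much into its point estimate.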

Worth remembering: These estimates reflect aggregate citation persistence across many prompts and weeks, not what happens when you rerun one exact prompt over and over again.