How accurately can Scrunch detect JavaScript or rendering issues that block AI crawlers?

Also asked as:

- Does Scrunch detect JavaScript content hidden from AI bots?

- How does Scrunch identify rendering issues that block AI?

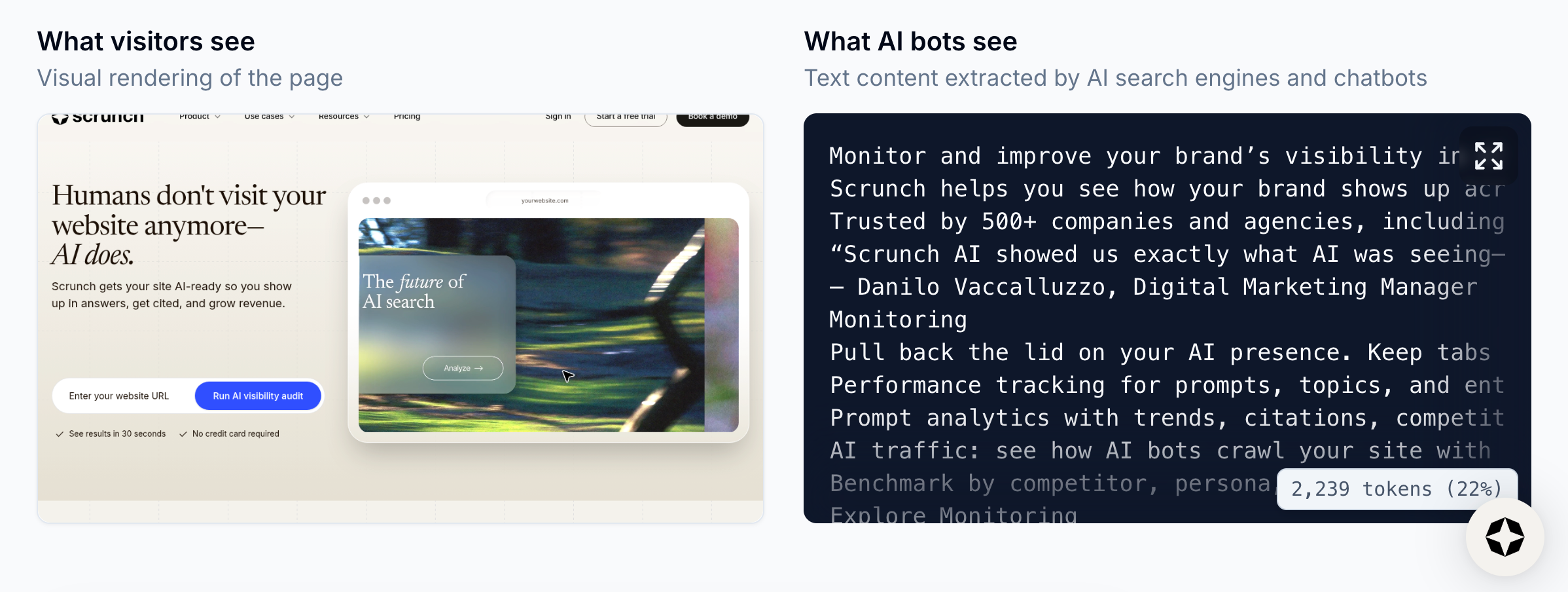

Scrunch detects JavaScript and rendering issues that block AI crawlers with high accuracy because its site audit compares the “human” view of a page with the “AI” view. It flags pages where key text is available only through JavaScript, and it checks whether each page can deliver meaningful content without JavaScript.

Additional context: The human view is the page as a JavaScript-enabled browser renders it. The AI view is what an AI bot can read from the page without executing JavaScript, because most AI bots do not execute it reliably. If Scrunch can't see key content in the AI view during a crawl, most AI bots can't either.
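To make the human-versus-AI comparison concrete, here is a minimal Python sketch of the general idea. It assumes you already have two HTML snapshots of the same page: `raw_html`, the server response as a client that does not run JavaScript receives it, and `rendered_html`, the DOM captured after a headless browser has executed the page's scripts. The function names and the word-overlap metric are hypothetical illustrations, not Scrunch's actual audit logic.

```python
from html.parser import HTMLParser


class _VisibleTextParser(HTMLParser):
    """Collects lowercase words from text nodes, skipping <script>, <style>, and <template>."""

    _SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.words: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self._SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self._SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only text outside skipped elements counts as readable content.
        if self._skip_depth == 0:
            self.words.extend(data.lower().split())


def visible_words(html: str) -> list[str]:
    parser = _VisibleTextParser()
    parser.feed(html)
    parser.close()
    return parser.words


def ai_view_coverage(raw_html: str, rendered_html: str) -> float:
    """Share of distinct words in the human (rendered) view that also appear in the AI (raw) view."""
    human = set(visible_words(rendered_html))
    if not human:
        return 1.0  # nothing is rendered, so nothing can be missing
    ai = set(visible_words(raw_html))
    return len(human & ai) / len(human)
```

A score near 1.0 means the raw HTML already carries what a human sees; a score near 0.0 points to an empty client-rendered shell. A real audit would more likely weight key content such as headings, product copy, and prices than count every word equally.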

Example

Imagine a Scrunch user runs a site audit on a JavaScript-heavy product page that AI platforms rarely cite.

The audit checks whether the page contains meaningful content that can be delivered without JavaScript.

It also checks whether the page returns prerendered content when a bot fetches it.

Scrunch then reports the specific results of these checks so the user can fix any JavaScript or rendering issues.
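The two checks in this example can be approximated with a short Python sketch: fetch the page once while identifying as an AI crawler, without running any JavaScript, then count the visible words in the response. The user-agent string is a placeholder that merely contains the `GPTBot` token (it is not OpenAI's exact header), and `MIN_WORDS` and the function names are hypothetical. The regex-based word count is a rough heuristic, not a real HTML parser, and Scrunch's own checks may work quite differently.

```python
import re
import urllib.request

# Placeholder user agent containing an AI-crawler token; not an exact real header.
BOT_USER_AGENT = "Mozilla/5.0 (compatible; GPTBot/1.0)"
MIN_WORDS = 50  # hypothetical threshold for "meaningful" content

_SKIPPED_BLOCKS = re.compile(r"<(script|style|template)\b.*?</\1\s*>", re.IGNORECASE | re.DOTALL)
_TAGS = re.compile(r"<[^>]+>")


def build_bot_request(url: str, user_agent: str = BOT_USER_AGENT) -> urllib.request.Request:
    """Build a GET request that identifies itself with the given crawler user agent."""
    return urllib.request.Request(url, headers={"User-Agent": user_agent})


def fetch_as_bot(url: str, user_agent: str = BOT_USER_AGENT, timeout: float = 10.0) -> str:
    """Download the raw HTML only; urllib never executes the page's JavaScript."""
    with urllib.request.urlopen(build_bot_request(url, user_agent), timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


def visible_word_count(html: str) -> int:
    """Roughly count words outside <script>, <style>, and <template> blocks."""
    text = _SKIPPED_BLOCKS.sub(" ", html)
    text = _TAGS.sub(" ", text)
    return len(text.split())


def has_meaningful_content(html: str, min_words: int = MIN_WORDS) -> bool:
    return visible_word_count(html) >= min_words
```

Usage might look like `has_meaningful_content(fetch_as_bot("https://example.com/product"))`, where the URL is a stand-in. A `False` result on a page that looks complete in a browser suggests the content is injected by JavaScript and no prerendered version is served to bots.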

Follow-up question: How do I fix JavaScript and rendering issues?

There are two ways to fix JavaScript and rendering issues:

- Fix at the source: Implement server-side rendering (SSR) or prerendering for single-page applications (SPAs), and reduce JavaScript dependencies for critical content. This approach requires engineering resources.

- Enable AXP: Scrunch’s Agent Experience Platform (AXP) delivers a clean HTML version to AI bots at the CDN level, with no code changes and no impact on the human site experience.
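Both fixes aim for the same outcome: a bot receives complete HTML on its first request. Serving a separate HTML version to bots at the edge resembles the general technique often called dynamic rendering, where the request's `User-Agent` decides whether a visitor gets a prerendered snapshot or the normal JavaScript app. The Python sketch below shows only that routing decision. The token list is a partial, illustrative sample, the function names are hypothetical, and this is not a description of how AXP is implemented.

```python
from typing import Optional

# Illustrative, non-exhaustive sample of user-agent tokens sent by AI crawlers.
AI_CRAWLER_TOKENS = (
    "GPTBot",
    "OAI-SearchBot",
    "ChatGPT-User",
    "ClaudeBot",
    "PerplexityBot",
    "CCBot",
)


def is_ai_crawler(user_agent: Optional[str]) -> bool:
    """Case-insensitive substring match against the known crawler tokens."""
    if not user_agent:
        return False
    ua = user_agent.lower()
    return any(token.lower() in ua for token in AI_CRAWLER_TOKENS)


def choose_variant(user_agent: Optional[str]) -> str:
    """Decide which version of the page an edge layer would serve."""
    return "prerendered-html" if is_ai_crawler(user_agent) else "javascript-app"
```

A substring match on `User-Agent` is easy to spoof, so production setups may also verify the IP ranges that crawler operators publish. The prerendered snapshot should carry the same content the human page shows; serving bots materially different content can be treated as cloaking.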

Related FAQs

What methods does Scrunch use to collect data from AI platforms?

Scrunch uses multiple methodologies to collect prompt data from AI platforms like ChatGPT, Perplexity, Google AI Overviews, and others, including browser automation and official platform APIs.

How do I create and use customer personas in Scrunch?

Scrunch lets users create customer personas based on unique characteristics and geographies. Users can then auto-generate prompts from those personas or assign personas to existing prompts for targeted tracking and filtering.

Which AI platforms and LLMs can Scrunch track and monitor?

Scrunch currently supports nine major AI platforms: ChatGPT, Claude, Gemini, Perplexity, Google AI Mode, Google AI Overviews, Meta AI, Microsoft Copilot, and Grok.